One month ago, Tripo gave us Smart Mesh P1.0 — the fastest game-ready low-poly generator around. Now they’re back with the other half of the equation. H3.1 is their high-fidelity model, and it generates production-quality meshes in approximately 2 seconds. That’s not a typo.

The Story

Tripo AI officially launched H3.1 on April 11, 2026, backed by $50M in fresh funding from Alibaba and Baidu Ventures. The model tackles a problem that has haunted AI 3D generation since day one: speed was always achieved at the cost of quality. You got either fast-and-rough, or beautiful-and-slow.

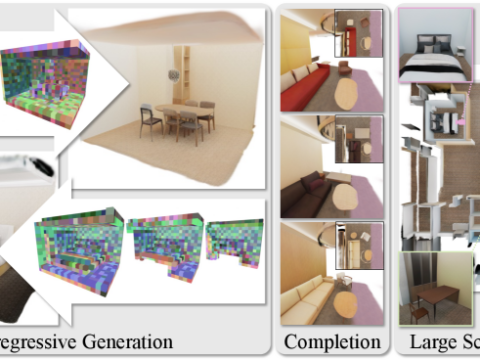

H3.1 is the quality half of that equation — and the architecture behind it is worth paying attention to. Instead of the traditional approach of converting geometry into token sequences (which bleeds spatial coherence), H3.1 represents vertices, edges, and polygon faces within a shared spatial feature field. This is native 3D diffusion: the model reasons directly in 3D space rather than lifting 2D features into an approximate 3D representation.
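For readers less familiar with mesh terminology, the three element types named above can be sketched in a few lines of Python. This only illustrates what vertices, edges, and faces are, using a hand-written tetrahedron and a hypothetical `edges_from_faces` helper. It is not a description of how H3.1's spatial feature field is implemented.

```python
def edges_from_faces(faces):
    """Collect the unique undirected edges implied by a list of polygon faces."""
    edges = set()
    for face in faces:
        # Pair each vertex index with the next one, wrapping around to close the polygon.
        for a, b in zip(face, face[1:] + face[:1]):
            edges.add((min(a, b), max(a, b)))
    return sorted(edges)

# A tetrahedron: 4 vertices as (x, y, z) and 4 triangular faces as vertex indices.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
faces = [(0, 2, 1), (0, 1, 3), (1, 2, 3), (0, 3, 2)]
edges = edges_from_faces(faces)

# For a closed, sphere-like mesh, V - E + F (the Euler characteristic) is 2.
euler = len(vertices) - len(edges) + len(faces)
print(len(edges), euler)
```

Faces are the only connectivity stored explicitly here; edges are derived from them, which is how most mesh file formats work as well.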

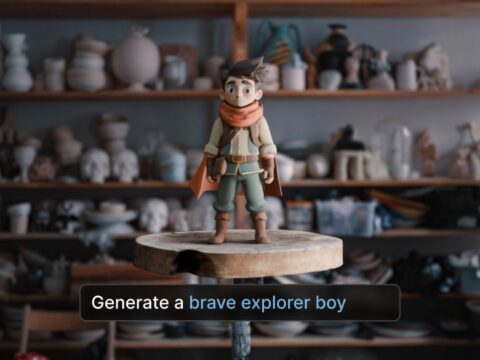

The result? Production-ready meshes in ~2 seconds — up to 100× faster than previous high-fidelity workflows. The model supports text prompts, single images, or up to 10 reference images including 4-view orthographic input. Characters, mechanical parts, complex organic forms, architectural elements — it handles the full range.

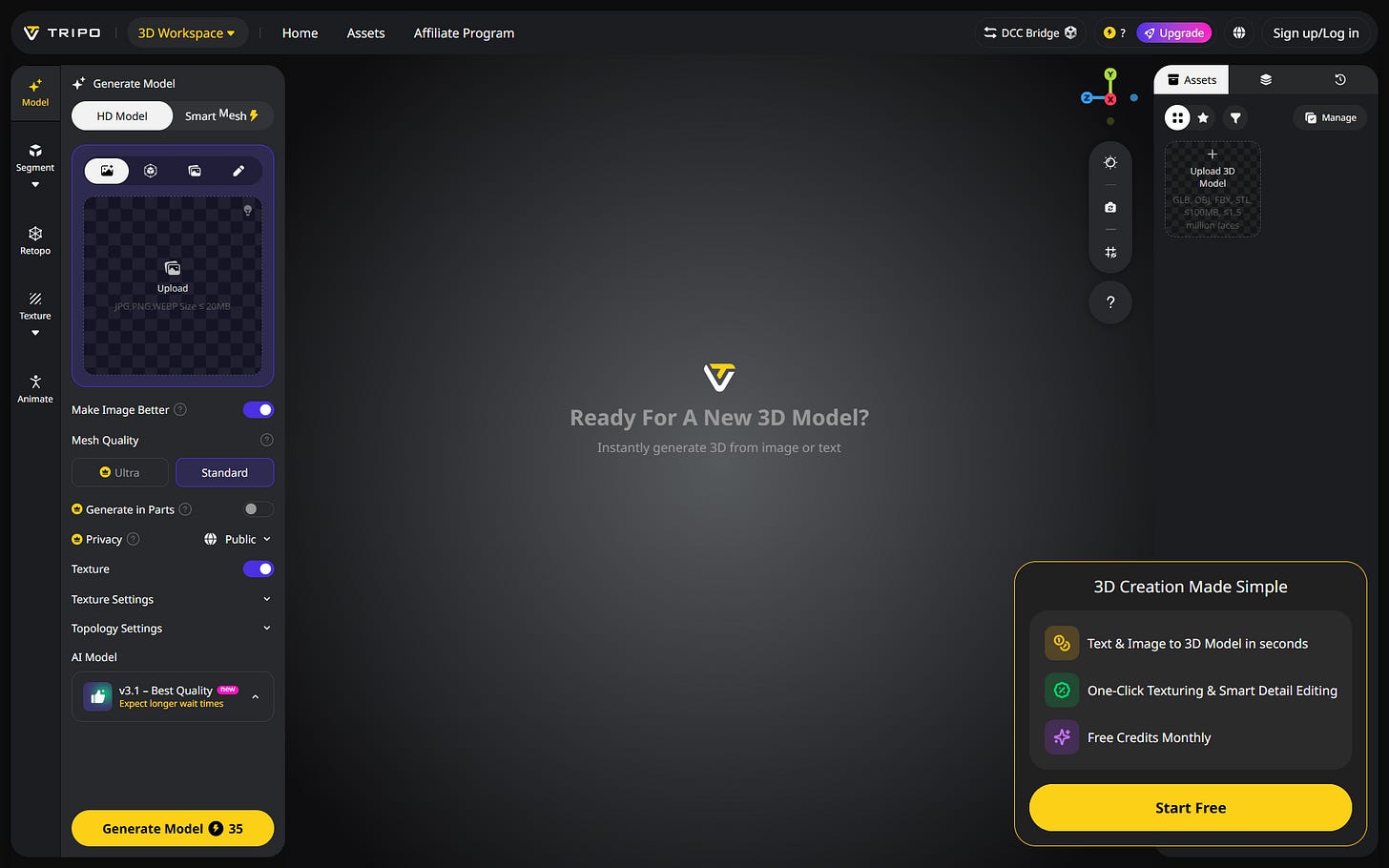

The dual-model strategy is smart design. Smart Mesh P1.0 (covered here last month) is your rapid-prototype tool: fast, low-poly, engine-ready. H3.1 is for hero assets that need to hold up in cinematic renders, high-resolution 3D prints, or product visualization. One platform, two modes, zero compromises on either end.

Why You Should Care

If you’ve been burned by AI 3D tools that produce great-looking thumbnails but fall apart under close inspection, H3.1 addresses exactly that failure mode. The geometry precision and texture consistency improvements are specifically designed to reduce — ideally eliminate — the post-processing round-trips that kill productivity.

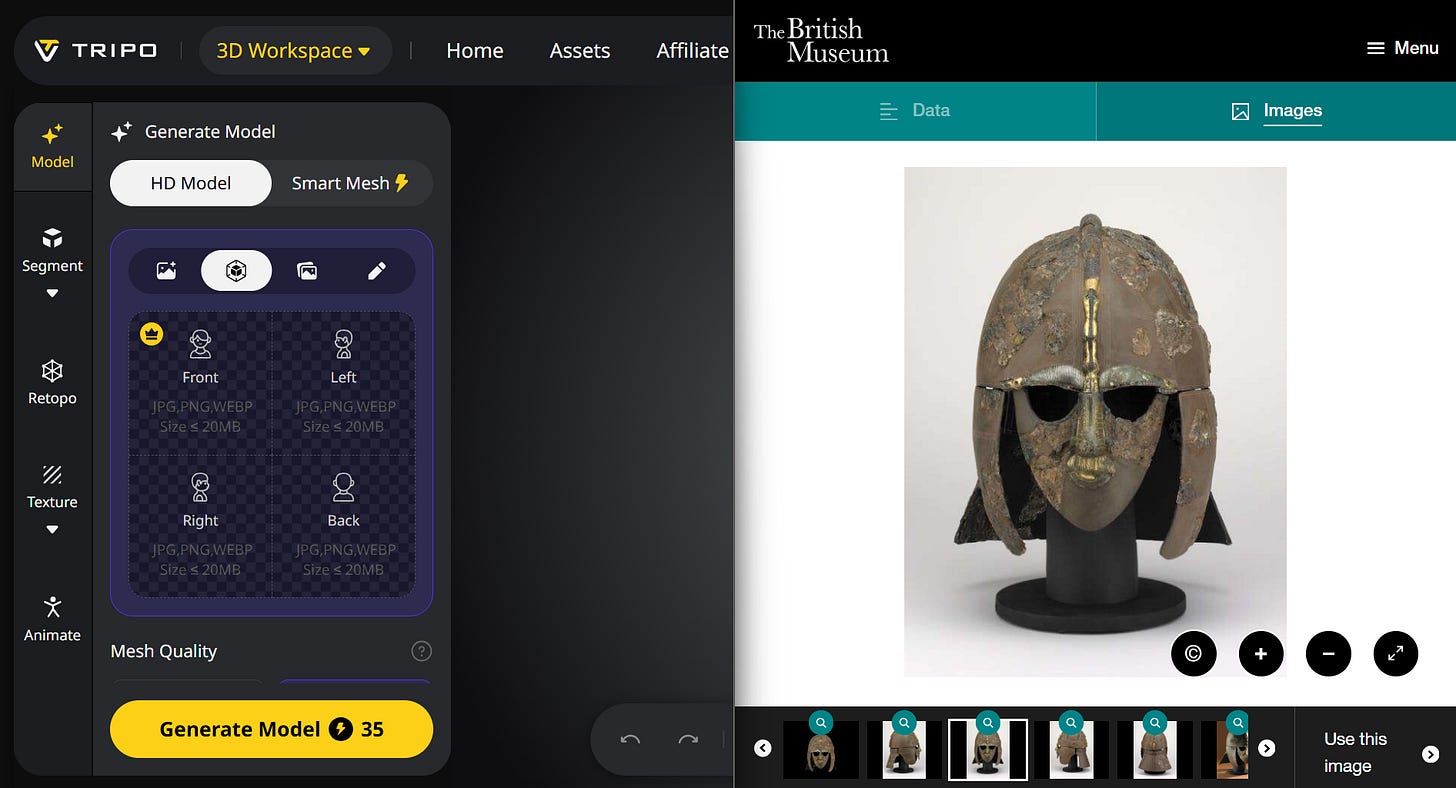

Real-world tests are encouraging. Independent reviewers tested H3.1 on complex reference subjects, including cultural heritage objects, and reported that multi-view orthographic input produced 3D reconstructions with “a lot more geometric detail and much cleaner textures” than earlier Tripo versions. The Sutton Hoo Helmet reconstruction (below) shows H3.1 handling intricate metalwork with fine surface detail, exactly the kind of asset that would have needed hours of manual sculpting six months ago.

For game developers, the implications are clear: you no longer have to choose between iteration speed and asset quality within a single platform. Concept fast with Smart Mesh, finish with H3.1. The end-to-end pipeline — generate → segment → retopo → texture → rig → animate — is all inside Tripo Studio.

The scale matters too: Tripo now serves 6.5M+ creators and 90,000 developers, with nearly 100 million 3D models generated to date. This isn’t a research demo — it’s infrastructure.

Try It

Tripo Studio is free to start, with free credits each month. H3.1 appears as “HD Model” in the generate panel; just toggle away from Smart Mesh to select it. For maximum quality, use the 4-view orthographic input mode with clean reference images against a white background.

- Text-to-3D, single image, or up to 10 reference images
- Output formats: GLB, OBJ, FBX, STL (up to 1.5M faces)
- Full pipeline: generate → segment → retopo → texture → rig → animate
- DCC Bridge for direct export to Blender, Cinema 4D, Maya
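If you export to OBJ, a quick sanity check on polygon count before importing into an engine or slicer can be sketched as below. This is a minimal example that assumes a plain-text Wavefront OBJ export; the `count_obj_faces` and `within_budget` helpers and the `MAX_FACES` constant are hypothetical names for illustration, not part of any Tripo tool or API.

```python
import io

# The "up to 1.5M faces" figure quoted in the list above.
MAX_FACES = 1_500_000

def count_obj_faces(lines):
    """Count face records ('f ...' lines) in Wavefront OBJ text.

    Each record is one polygon, which may be a triangle, quad, or n-gon,
    so this is a polygon count rather than a triangle count.
    """
    return sum(1 for line in lines if line.lstrip().startswith("f "))

def within_budget(face_count, budget=MAX_FACES):
    """Return True if the face count does not exceed the budget."""
    return face_count <= budget

# A tiny in-memory OBJ (a tetrahedron) standing in for a real export.
sample_obj = """\
v 0 0 0
v 1 0 0
v 0 1 0
v 0 0 1
f 1 3 2
f 1 2 4
f 2 3 4
f 1 4 3
"""
n = count_obj_faces(io.StringIO(sample_obj))
print(n, within_budget(n))
```

For a real file, pass an open file handle instead of the `io.StringIO` object; the function reads line by line, so large exports do not need to fit in memory.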

IK3D Lab Take

The native 3D diffusion architecture is the part that’s actually worth getting excited about. Operating directly in 3D space — representing geometry as vertices, edges, and faces in a shared spatial feature field — is the correct direction. Less approximation, better topological coherence, more predictable results. H3.1 is proof this approach works at production speed.

The $50M backing from Alibaba and Baidu Ventures signals that this isn’t a pivot or a side project — it’s a serious infrastructure play. Watch for H3.1’s geometry quality to become the baseline expectation that forces the rest of the field to catch up. Again.