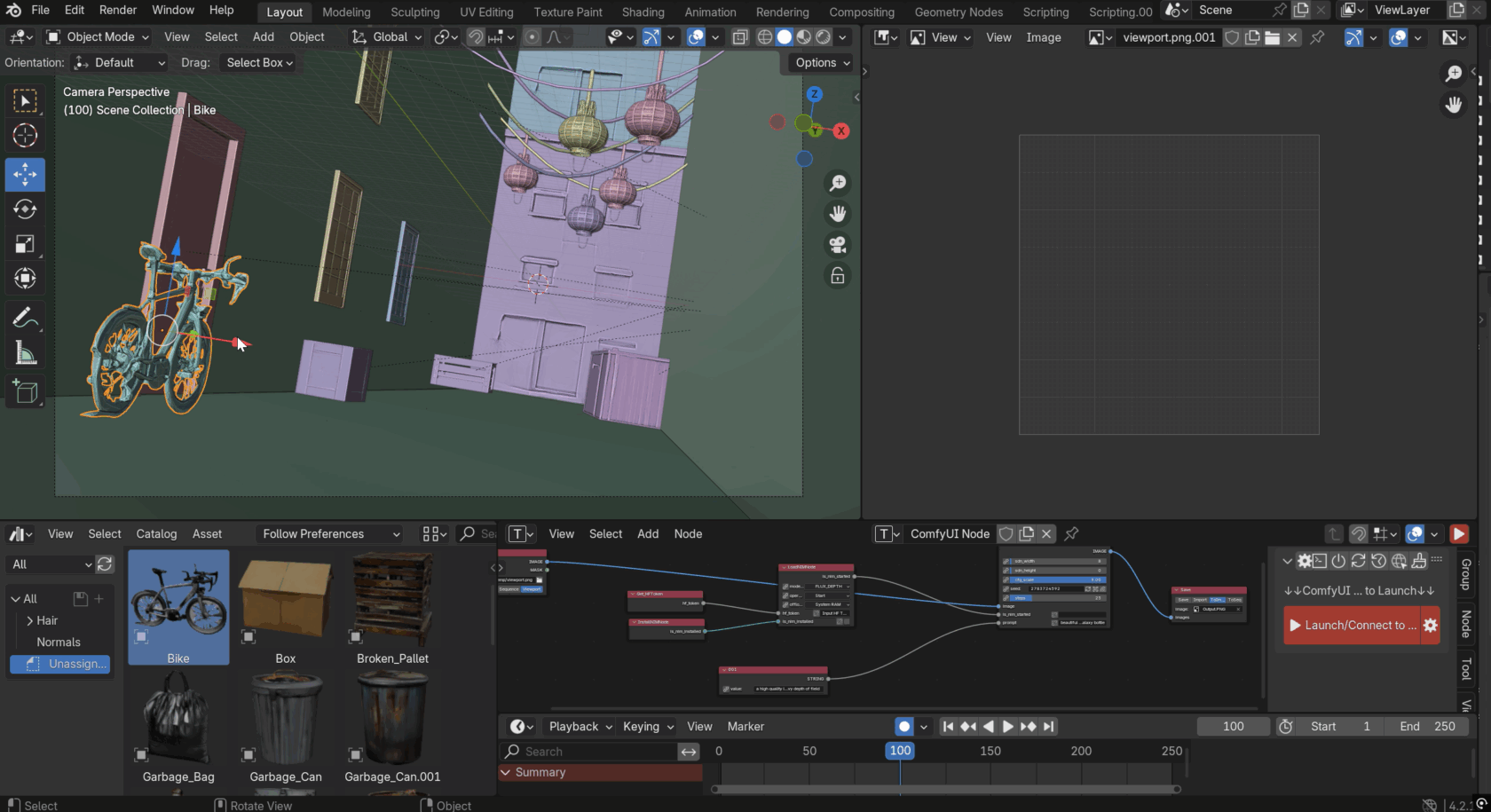

Type “cyberpunk night market.” Wait two minutes. Get up to twenty prototype-ready 3D assets, sorted, named, and dropped straight into Blender. No subscription, no cloud round-trips, no model-by-model prompt grinding. NVIDIA just shipped the prototyping pipeline 3D artists have been duct-taping together for the last two years, and made it free.

The Story

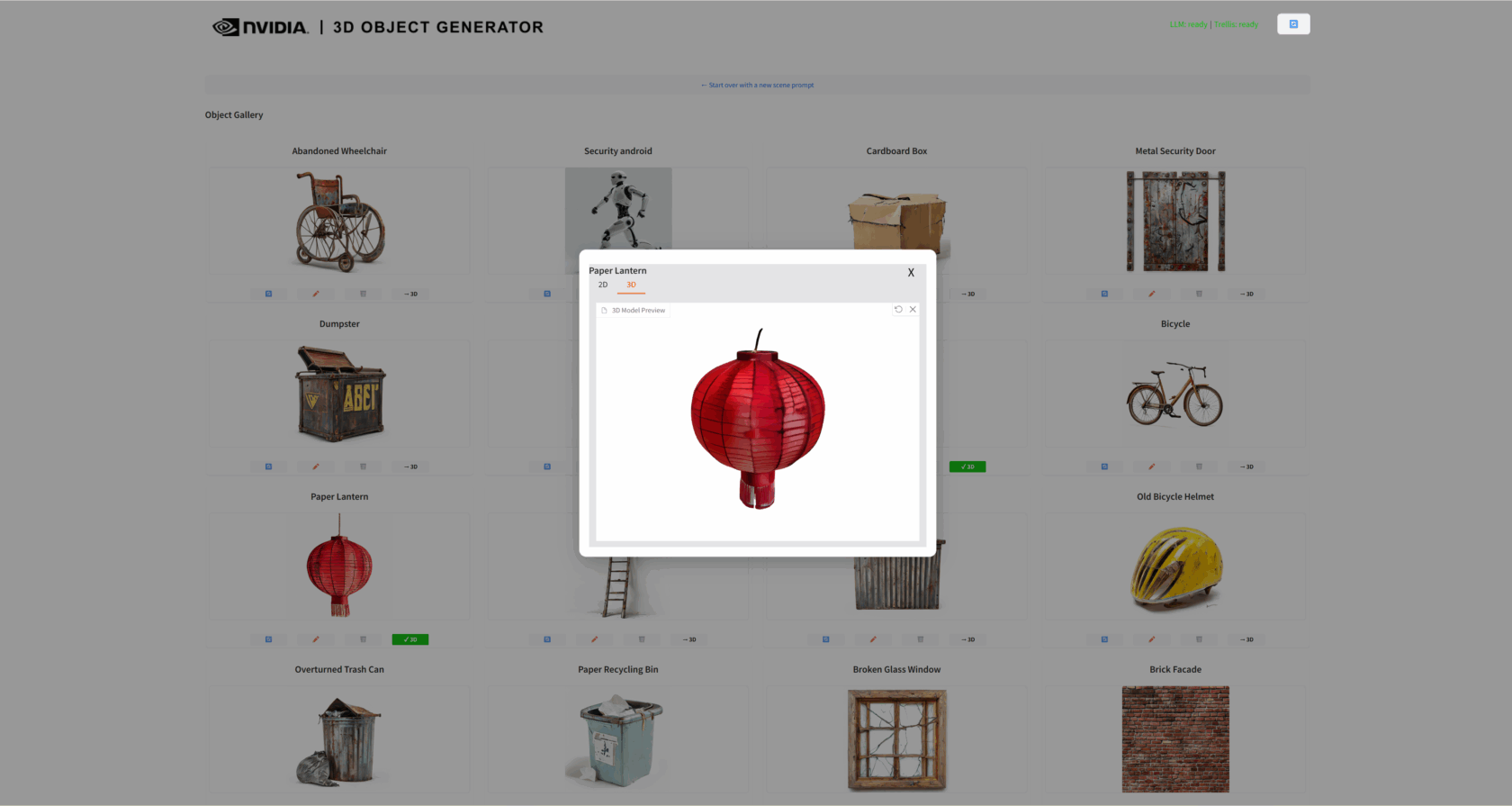

The NVIDIA AI Blueprint for 3D Object Generation is an open-source workflow (Apache 2.0) that chains three models into one Blender add-on. You describe a scene. A Llama 3.1 8B NIM brainstorms up to twenty objects that would belong in it. NVIDIA SANA generates a preview image for each. Then the Microsoft TRELLIS NIM microservice converts every preview into a real, textured 3D mesh and pipes it into your viewport.
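
The hand-off between the three stages can be pictured with a minimal Python sketch. Everything in it is hypothetical: the function names, the `Asset` dataclass, and the stubbed return values are illustrative stand-ins, not the Blueprint's actual API. In a real run the stubs would be replaced by calls to the Llama 3.1 8B NIM, SANA, and the TRELLIS NIM.

```python
from dataclasses import dataclass
from typing import List, Optional

MAX_OBJECTS = 20  # the article's "up to twenty objects"


@dataclass
class Asset:
    name: str                      # object proposed by the LLM stage
    preview: Optional[str] = None  # stand-in for a preview image
    mesh: Optional[str] = None     # stand-in for a textured 3D mesh


def plan_objects(scene: str) -> List[str]:
    """Hypothetical stub for the LLM stage: propose objects for a scene."""
    return [f"{scene} prop {i + 1}" for i in range(25)]


def make_preview(name: str) -> str:
    """Hypothetical stub for the text-to-image stage."""
    return f"preview<{name}>"


def make_mesh(preview: str) -> str:
    """Hypothetical stub for the image-to-3D stage."""
    return f"mesh<{preview}>"


def run_pipeline(scene: str) -> List[Asset]:
    # Stage 1: brainstorm object names, capped at MAX_OBJECTS.
    assets = [Asset(name=n) for n in plan_objects(scene)[:MAX_OBJECTS]]
    # Stage 2: one preview per object.
    for asset in assets:
        asset.preview = make_preview(asset.name)
    # Stage 3: one mesh per preview.
    for asset in assets:
        asset.mesh = make_mesh(asset.preview)
    return assets


assets = run_pipeline("cyberpunk night market")
print(len(assets), assets[0].mesh)
```

Keeping each stage behind its own function mirrors the chained design: any one model could be swapped out without touching the other two.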

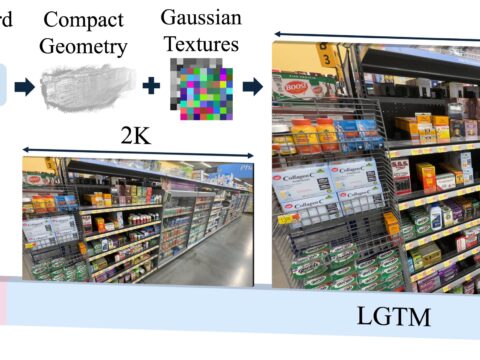

TRELLIS is the secret weapon here. Microsoft Research released it as one of the strongest open image-to-3D models of the last cycle: high-fidelity geometry, complex shapes, real textures. NVIDIA wrapped it into a NIM microservice optimized with PyTorch on the latest RTX silicon, and the result is a reported 20% speedup: around six seconds shaved off every object on an RTX 5090. Multiply that across twenty assets and a long iteration day, and the math gets serious fast.
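
To put rough numbers on that, here is a back-of-the-envelope calculation in Python using only the figures quoted above (six seconds per object, twenty objects, 20%). The per-object baseline is not stated anywhere in this article, so the two baselines below are inferred under two different readings of "20% speedup," and the 30-batch working day is purely an illustrative assumption.

```python
# Figures quoted in the article (reported for an RTX 5090).
SECONDS_SAVED_PER_OBJECT = 6
OBJECTS_PER_BATCH = 20
SPEEDUP_PERCENT = 20

# Time saved on one full batch of twenty objects.
saved_per_batch_s = SECONDS_SAVED_PER_OBJECT * OBJECTS_PER_BATCH

# Reading A (assumption): each object takes 20% less time,
# so saved = 0.20 * old_time.
old_a = SECONDS_SAVED_PER_OBJECT * 100 / SPEEDUP_PERCENT
new_a = old_a - SECONDS_SAVED_PER_OBJECT

# Reading B (assumption): throughput is 20% higher,
# so old_time = 1.20 * new_time and saved = 0.20 * new_time.
new_b = SECONDS_SAVED_PER_OBJECT * 100 / SPEEDUP_PERCENT
old_b = new_b + SECONDS_SAVED_PER_OBJECT

# Illustrative assumption, not from the article: 30 batches in a working day.
BATCHES_PER_DAY = 30
saved_per_day_min = saved_per_batch_s * BATCHES_PER_DAY / 60

print(f"saved per batch: {saved_per_batch_s} s")
print(f"reading A: {old_a:.0f} s -> {new_a:.0f} s per object")
print(f"reading B: {old_b:.0f} s -> {new_b:.0f} s per object")
print(f"saved per day at {BATCHES_PER_DAY} batches: {saved_per_day_min:.0f} min")
```

Under either reading the per-batch saving is the same two minutes; only the implied per-object time differs.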

The interaction loop is what sells it. You don’t get a single black-box dump. Each preview image is editable: regenerate the ones you don’t like, tweak the prompts, delete the duds, run the next pass. Only the survivors get promoted to full 3D. That’s the part most one-shot text-to-3D tools refuse to give you, and it’s exactly the part a working artist needs.
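
That review step amounts to a filter sitting between the preview stage and the 3D stage. A minimal sketch, with hypothetical names throughout: `Candidate`, `review`, `fake_render`, and the action strings are illustrative and are not the add-on's interface.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Decision = Tuple[str, Optional[str]]  # (action, optional replacement prompt)


@dataclass
class Candidate:
    prompt: str
    preview: str
    version: int = 1


def fake_render(prompt: str, version: int) -> str:
    """Hypothetical stand-in for the preview generator."""
    return f"{prompt} (v{version})"


def review(
    candidates: List[Candidate],
    decisions: Dict[str, Decision],
    render: Callable[[str, int], str],
) -> List[Candidate]:
    """Apply one review pass; anything without a decision is kept as-is."""
    survivors = []
    for cand in candidates:
        action, new_prompt = decisions.get(cand.prompt, ("keep", None))
        if action == "delete":
            continue  # dropped before the expensive 3D stage
        if action == "regenerate":
            prompt = new_prompt or cand.prompt
            version = cand.version + 1
            cand = Candidate(prompt, render(prompt, version), version)
        survivors.append(cand)
    return survivors


previews = [
    Candidate(p, fake_render(p, 1))
    for p in ["neon sign", "noodle cart", "broken drone"]
]
decisions = {
    "noodle cart": ("regenerate", "noodle cart with steam"),
    "broken drone": ("delete", None),
}
survivors = review(previews, decisions, fake_render)
for cand in survivors:
    print(cand)
```

Only the list returned by `review` would be handed to the image-to-3D stage, which is why culling at the preview step saves the most time.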

Why You Should Care

Prototyping a 3D scene is the most expensive cheap thing in the industry. You need a dozen filler props before you can even tell whether the layout works — crates, lanterns, signage, foliage, bottles, debris — and modeling each one is a tax on the actual creative work. Asset stores half-solve it but leave you fighting style consistency. Single-shot generators give you one impressive hero asset and zero ecosystem around it.

The Blueprint flips this. The LLM stage doesn’t just generate things; it generates a coherent set of things that share a scene’s logic. Twenty pieces of “abandoned arcade” feel like they came from the same arcade. That’s the unlock. For game designers blocking out a level, architects populating a render, or indie animators staging a shot, this can turn a two-day prop pass into an afternoon.

Try It / Follow Them

Bad news first: this one has teeth on the hardware side. You’ll need an RTX 5090, 5080, 4090, 4080, or RTX 6000 Ada with at least 16GB of VRAM, plus 48GB of system RAM (recommended), about 50GB of disk space for the model weights, Windows 10 or 11, and CUDA 12.8. The PowerShell installer handles Git LFS, Miniconda, dependencies, and Hugging Face downloads, and takes 30 to 60 minutes.
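
A quick preflight check against those numbers can be sketched in Python. The GPU names and thresholds come from the requirements above; the function name and the sample input lines are illustrative assumptions. The parser expects one `name, memory` line in the CSV format printed by `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits` (memory in MiB). The name check is a naive substring match, not NVIDIA's own compatibility test, and system RAM is not checked at all.

```python
from typing import List

SUPPORTED_GPUS = ("RTX 5090", "RTX 5080", "RTX 4090", "RTX 4080", "RTX 6000 Ada")
# Slightly under 16 * 1024 MiB, since reported totals can come in
# a little below the nominal card size (assumed tolerance).
MIN_VRAM_MIB = 16_000
MIN_FREE_DISK_GB = 50


def preflight(gpu_csv_line: str, free_disk_gb: float) -> List[str]:
    """Return a list of problems; an empty list means every check passed."""
    # Split on the last comma so commas in a GPU name cannot break parsing.
    name, _, vram = gpu_csv_line.rpartition(",")
    name = name.strip()
    vram_mib = int(vram.strip())

    problems = []
    if not any(gpu in name for gpu in SUPPORTED_GPUS):
        problems.append(f"unsupported GPU: {name}")
    if vram_mib < MIN_VRAM_MIB:
        problems.append(f"only {vram_mib} MiB of VRAM, need about 16 GB")
    if free_disk_gb < MIN_FREE_DISK_GB:
        problems.append(f"only {free_disk_gb:.0f} GB free, need about 50 GB")
    return problems


# Sample inputs (made up for illustration).
ok = preflight("NVIDIA GeForce RTX 4090, 24564", free_disk_gb=120.0)
bad = preflight("NVIDIA GeForce RTX 3060, 12288", free_disk_gb=20.0)
print(ok)
for problem in bad:
    print(problem)
```

On a real machine the free-space figure could come from `shutil.disk_usage(path).free / 1e9`, and the GPU line from running the `nvidia-smi` query above.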

- Repo: github.com/NVIDIA-AI-Blueprints/3d-object-generation

- Blueprint page: build.nvidia.com/nvidia/object-generation-3d

- Background read: NVIDIA RTX AI Garage announcement

- TRELLIS research: Microsoft Research TRELLIS repo

IK3D Lab Take

This is the first text-to-3D pipeline that respects how artists actually work. Most of the field is still chasing the “one prompt, one perfect asset” trophy. NVIDIA went the other direction — many objects, planned together, editable midstream, exported into the tool you already live in. It’s not the most photoreal generator on the market, and the hardware bar is real, but the workflow shape is the future. Expect every other player — Tripo, Rodin, Meshy, Hunyuan — to copy the multi-asset, scene-aware loop within a quarter.

The deeper signal: NVIDIA is no longer just selling shovels. With NIM microservices wrapping the best open models (Microsoft’s TRELLIS, here), they’re shipping the entire mine. If you’re an indie 3D artist with a 4090 sitting on your desk, this is the closest you’ll get this year to a one-person studio pipeline.