Forty shots. Two actors. One 192-camera volume. No traditional renderer. Framestore just shipped the first use of 4D Gaussian Splatting in a major Hollywood feature film — and the implications for every 3D artist, VFX supe, and game dev reading this are massive.

The Story

In James Gunn’s Superman (2025), there’s a scene where Clark Kent discovers holographic messages left by his Kryptonian parents — corrupted transmissions, degraded across light years, from people long dead. Emotionally, it had to be real. Technically, it needed to be fully controllable in post. Traditional VFX couldn’t deliver both. 4D Gaussian Splatting could.

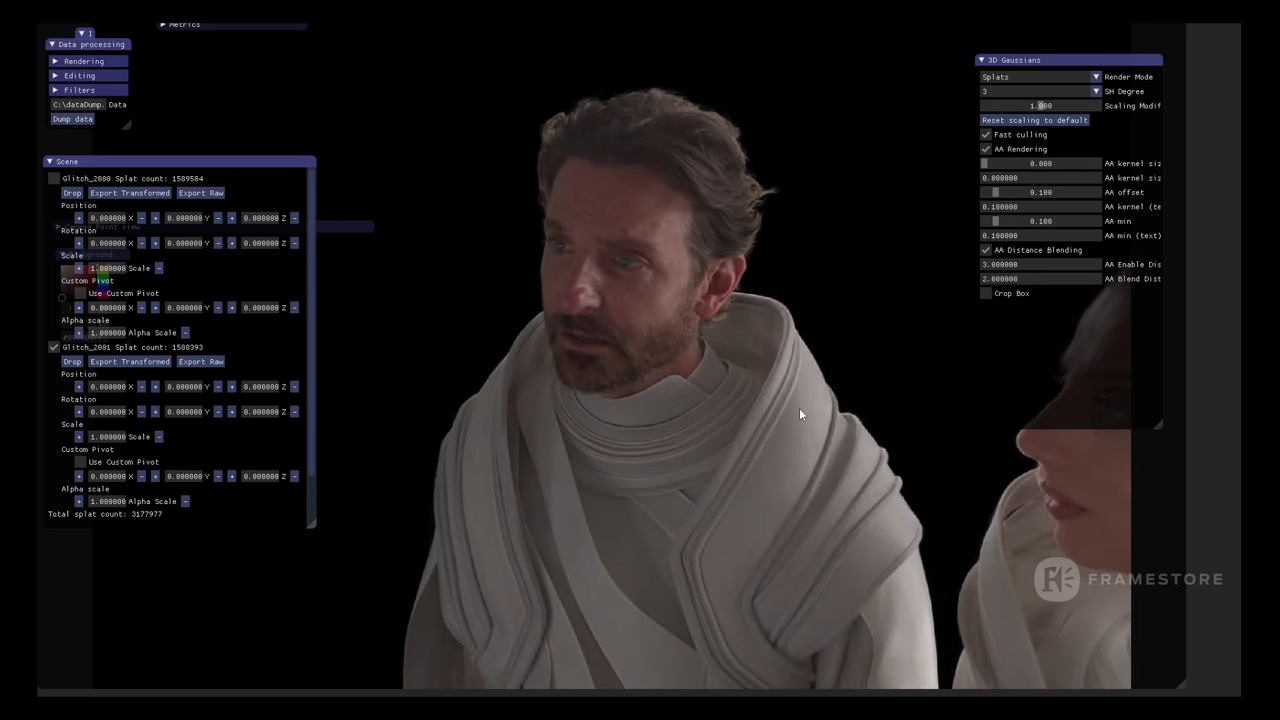

Framestore Montreal partnered with Infinite Realities — a two-person volumetric capture company in Ipswich, UK — to capture Bradley Cooper and Angela Sarafyan simultaneously on their Deus Capture Stage. 192 cameras. Spherical array. 24 fps. A single two-minute take. The first time this stage had ever captured two actors at once.

The result was hundreds of gigabytes of per-frame PLY files: no mesh, no UVs, no topology. Just dynamic Gaussian splat sequences, trained over 5+ days of GPU compute, that encode how both actors looked from every camera angle at every frame. Living photography.
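To get a feel for why a two-minute take lands in the hundreds of gigabytes, here is a back-of-envelope sketch. It assumes the PLY layout written by the reference 3D Gaussian Splatting implementation (62 float32 attributes per splat at spherical-harmonics degree 3) and a hypothetical 500,000 splats per frame. Neither number comes from Framestore or Infinite Realities, and the production files may use a different layout or compression.

```python
# Per-splat attributes in the reference 3DGS PLY layout (SH degree 3):
# position (3) + normal (3) + SH DC colour (3) + SH rest (45)
# + opacity (1) + scale (3) + rotation quaternion (4)
FLOATS_PER_SPLAT = 3 + 3 + 3 + 45 + 1 + 3 + 4  # 62 floats
BYTES_PER_SPLAT = FLOATS_PER_SPLAT * 4          # float32 -> 248 bytes


def sequence_size_gb(duration_s, fps, splats_per_frame):
    """Return (frame_count, uncompressed size in GB) for a per-frame PLY sequence."""
    frames = int(duration_s * fps)
    total_bytes = frames * splats_per_frame * BYTES_PER_SPLAT
    return frames, total_bytes / 1e9


# 120 s and 24 fps are from the article; 500_000 splats per frame is an ASSUMPTION.
frames, size_gb = sequence_size_gb(duration_s=120, fps=24, splats_per_frame=500_000)
print(f"{frames} frames, about {size_gb:.0f} GB")  # 2880 frames, about 357 GB
```

Change the splat count and the total scales linearly, which is why per-frame splat budgets matter so much for storage and I/O.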

At Framestore, CG Supervisor Kevin Sears and his team loaded those PLY sequences into Houdini via the GSOPs plugin — treating the splat data as point-cloud geometry. The corruption effects (hologram stutters, jitter, misalignment) were applied by manipulating the raw splat points with noise fields, directional offsets, and segment-level instability. Not 2D compositing tricks layered on top. Actual data distortion, baked into the volumetric render itself.
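As a rough illustration of what "manipulating the raw splat points" can look like, here is a minimal sketch in plain Python. It is not Framestore's setup: the function name, the height-band segmentation, and every parameter value are hypothetical. It slices the point cloud into horizontal bands, gives each band one shared sideways offset per frame (segment-level misalignment), and adds a small independent jitter to every point.

```python
import random


def glitch_points(points, frame, band_height=0.25, max_shift=0.05,
                  jitter=0.002, seed=0):
    """Return a corrupted copy of (x, y, z) splat centres for one frame.

    All names and default values here are illustrative assumptions.
    """
    # Seeding on (seed, frame) makes each frame reproducible but different.
    rng = random.Random(f"{seed}:{frame}")
    band_shift = {}  # one sideways offset per height band
    out = []
    for x, y, z in points:
        band = int(y // band_height)
        if band not in band_shift:
            band_shift[band] = rng.uniform(-max_shift, max_shift)
        out.append((
            x + band_shift[band] + rng.gauss(0.0, jitter),  # band offset + noise
            y + rng.gauss(0.0, jitter),                     # noise only
            z + rng.gauss(0.0, jitter),                     # noise only
        ))
    return out


# Three toy splat centres: two in the lowest band, one higher up.
cloud = [(0.0, 0.10, 0.0), (1.0, 0.20, 0.0), (0.0, 0.90, 0.0)]
for frame in range(3):
    print(frame, glitch_points(cloud, frame))
```

In Houdini, logic like this would more likely live in a wrangle or VOP network acting on the imported points. The point is that the distortion happens on the data before rendering, so it stays consistent from any virtual camera.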

Rendering bypassed path-tracing entirely — the pipeline used a custom SIBR Viewer with improved anti-aliasing, outputting 4K PNG sequences with alpha. Final compositing happened in Nuke via Irrealix’s native splat plugin for depth extraction. The full Houdini → SIBR → Nuke pipeline was built from scratch, by a small team, for a $200M studio tentpole.
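The alpha channel is what lets those rendered frames sit over a plate in comp. As a hedged sketch of the underlying maths (not the studio's Nuke setup), the snippet below assumes the PNGs carry straight, unassociated alpha as the PNG format defines it, premultiplies a pixel, and applies the standard "over" operation. Function names are illustrative, and channel values are floats in the 0 to 1 range.

```python
def premultiply(rgba):
    """Convert a straight-alpha (r, g, b, a) pixel to premultiplied alpha."""
    r, g, b, a = rgba
    return (r * a, g * a, b * a, a)


def over(fg, bg):
    """Composite premultiplied pixel fg over premultiplied pixel bg."""
    k = 1.0 - fg[3]  # how much of the background shows through
    return tuple(f + b * k for f, b in zip(fg, bg))


# A half-transparent red hologram pixel over an opaque blue plate pixel.
hologram = premultiply((1.0, 0.0, 0.0, 0.5))
plate = premultiply((0.0, 0.0, 1.0, 1.0))
print(over(hologram, plate))  # (0.5, 0.0, 0.5, 1.0)
```

Whether a given renderer writes straight or premultiplied values is worth checking before comp; getting it wrong shows up as dark or bright fringes around soft splat edges.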

“Instead of pre-planning motion control with moving cameras that can be sensitive to misalignment and flexibility, we can now re-film their performance in post with any context the director would like.”

Kevin Sears, CG Supervisor, Framestore Montreal

Why You Should Care

This isn’t just a cool tech demo. This is a paradigm shift in how performances can be captured and used.

- Directors get performance AND flexibility. James Gunn explicitly wanted real human performances, not digital doubles or motion capture retargeted to CG. But he also needed full camera freedom in post. 4D GS delivered both, a combination that is hard to get from any other technique.

- The pipeline is real and proven. PLY sequences → Houdini (GSOPs) → SIBR Viewer → Nuke (Irrealix plugin). Not a research paper. Not a proof of concept. 40 shipped shots in a blockbuster.

- Two people built the capture side. Infinite Realities is a two-person company. The barrier to entry for volumetric capture at this quality level is collapsing.

- The toolchain is converging. OTOY’s Octane Render is adding native splat rendering in 2026. GSOPs in Houdini is production-ready. Nuke plugins exist. Support is spreading across the major DCC tools, and the momentum is hard to miss.

- Re-cutting performances after the shoot. Because the full two-minute take exists as volumetric data, editors can choose any moment, from any angle, at any focal length — months after the actors walked off set. This rewrites the economics of reshoot decisions.
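"Any focal length" is concrete once the camera is virtual: picking a lens in post comes down to picking a field of view. A minimal sketch of the standard pinhole relation follows; the 36 mm sensor width is an assumption (full-frame stills format), not a detail from the production.

```python
import math


def horizontal_fov_deg(focal_length_mm, sensor_width_mm=36.0):
    """Horizontal field of view of an ideal pinhole camera, in degrees."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


# Compare a few virtual lenses on the same captured performance.
for focal in (18.0, 35.0, 50.0, 85.0):
    print(f"{focal:>5.0f} mm -> {horizontal_fov_deg(focal):.1f} degrees")
```

Longer focal lengths give a narrower view, so the same moment of the take can be reframed as a wide or a close-up without the actors ever returning to the stage.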

Kevin Sears put it perfectly: “It was like theatre in the round. We could shoot around their heads, glitch the data for story beats, and still retain the exact performance of the actors.”

Try It / Follow Them

Ready to dig in? Here’s where to start:

- befores & afters breakdown — The most technical writeup, with full pipeline details from Kevin Sears.

- The Art of VFX interview — Extended Q&A with Stéphane Nazé, Loïc Mireault, and Kevin Sears.

- RadianceFields.com — Follow Michael Rubloff’s ongoing coverage of GS in production.

- Framestore’s Superman case study — Full breakdown of all the film’s VFX challenges, not just the splat work.

- GSOPs for Houdini — The plugin Framestore used to treat PLY splat sequences as Houdini geometry. Search for it by name to find the current release.

- fxguide podcast — Deep audio interview with the Framestore team covering this and every other effect in the film.

IK3D Lab Take

For years, Gaussian Splatting lived in the research lab, in the hobbyist’s garage, in the experimental pre-viz reel. The Superman pipeline is the moment it graduates. Not because the technology changed — it’s been capable of this for a while — but because a major studio, a major director, and a major VFX house trusted it enough to ship it in 40 shots of a $200M film.

The tools are there. Houdini speaks PLY. Nuke has splat plugins. OTOY is adding native rendering. The Deus Capture Stage delivers 192-camera PLY sequences. What’s changing is that artists are now building the workflows to connect these dots — and shipping them.

If you’re a 3D artist or VFX pipeline TD, the time to experiment with Gaussian Splatting is now. Not next year. The studios are already there. The question is whether your workflow is catching up.