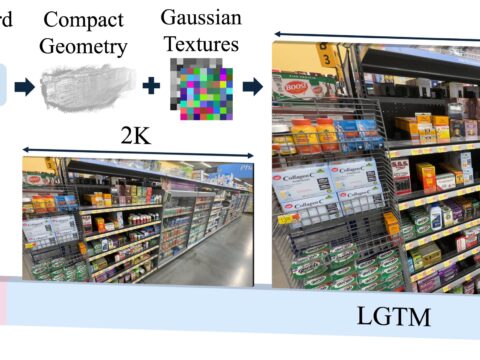

ByteDance just dropped Seed3D 2.0 on April 23, and quietly torched two of the biggest reasons studios still don’t trust AI-generated 3D: soft edges and fake-looking materials. The model claims SOTA on both geometry and texture, ships through a public API on Volcano Engine, and is built to plug straight into Isaac Sim for robotics. This is no demo reel: it’s positioned as production-grade.

The Story

If you’ve spent any time wrangling Tripo, Hunyuan3D or Trellis output, you know the dirty secret of AI 3D generation: the silhouette looks great in the thumbnail, then you bring the asset into Blender and every chamfer is a soft potato, every thin wall is a blob, and every “metal” surface looks like it was painted by someone who has never touched a real metal object. That’s the wall the entire industry has been hitting since 2024.

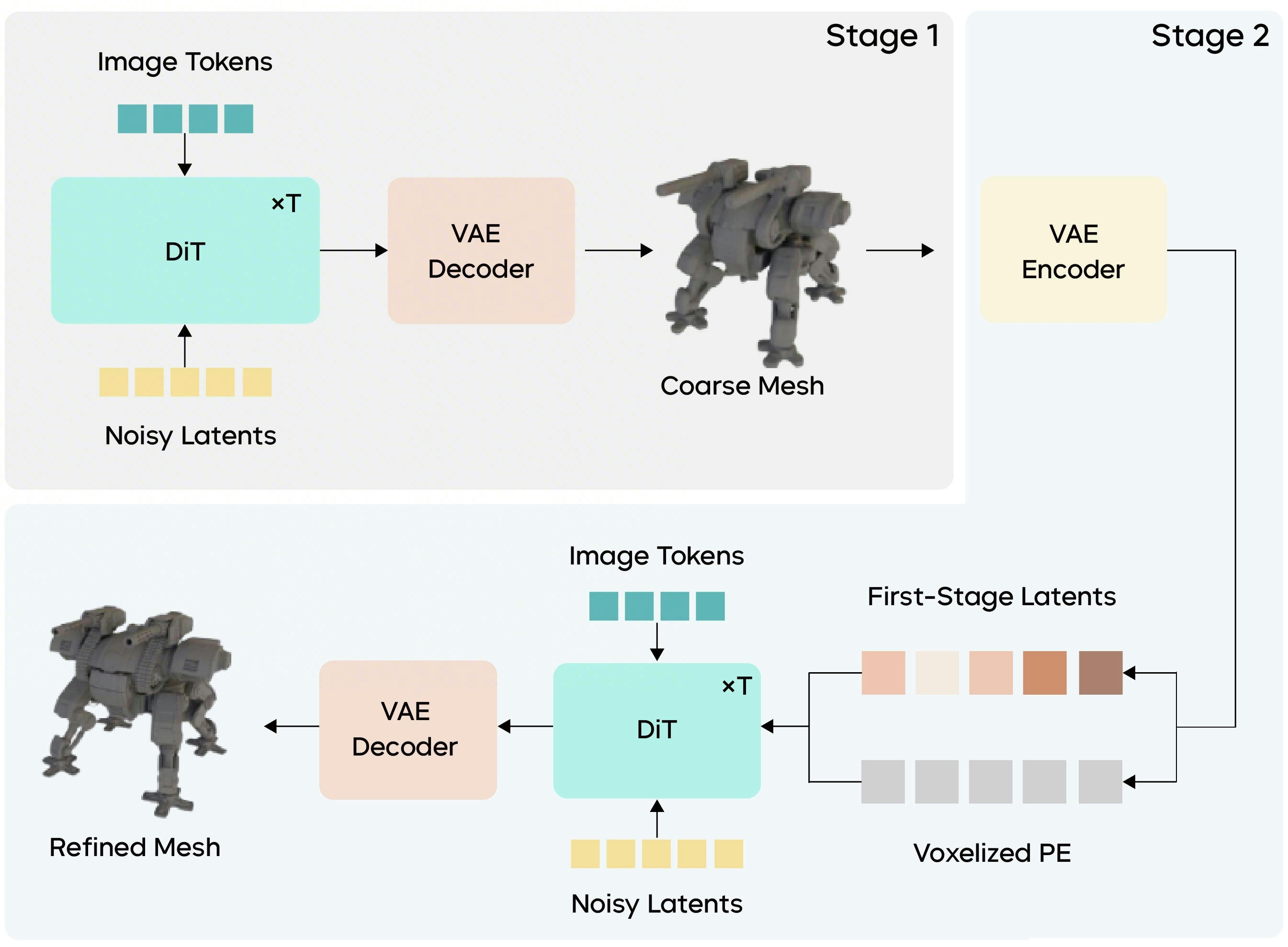

Seed3D 2.0’s answer is architectural, not cosmetic. Geometry is generated by a two-stage DiT they call coarse-to-fine: a first pass nails the global structure, then a second pass uses that result as a local-aware prior — geometric anchors plus voxelized positional encoding — to recover sharp edges, screw threads, thin metal sheets, the stuff the previous generation of models smeared into mush. They also rebuilt the VAE so the latent reconstruction stops eating fine detail before the diffusion process even starts.

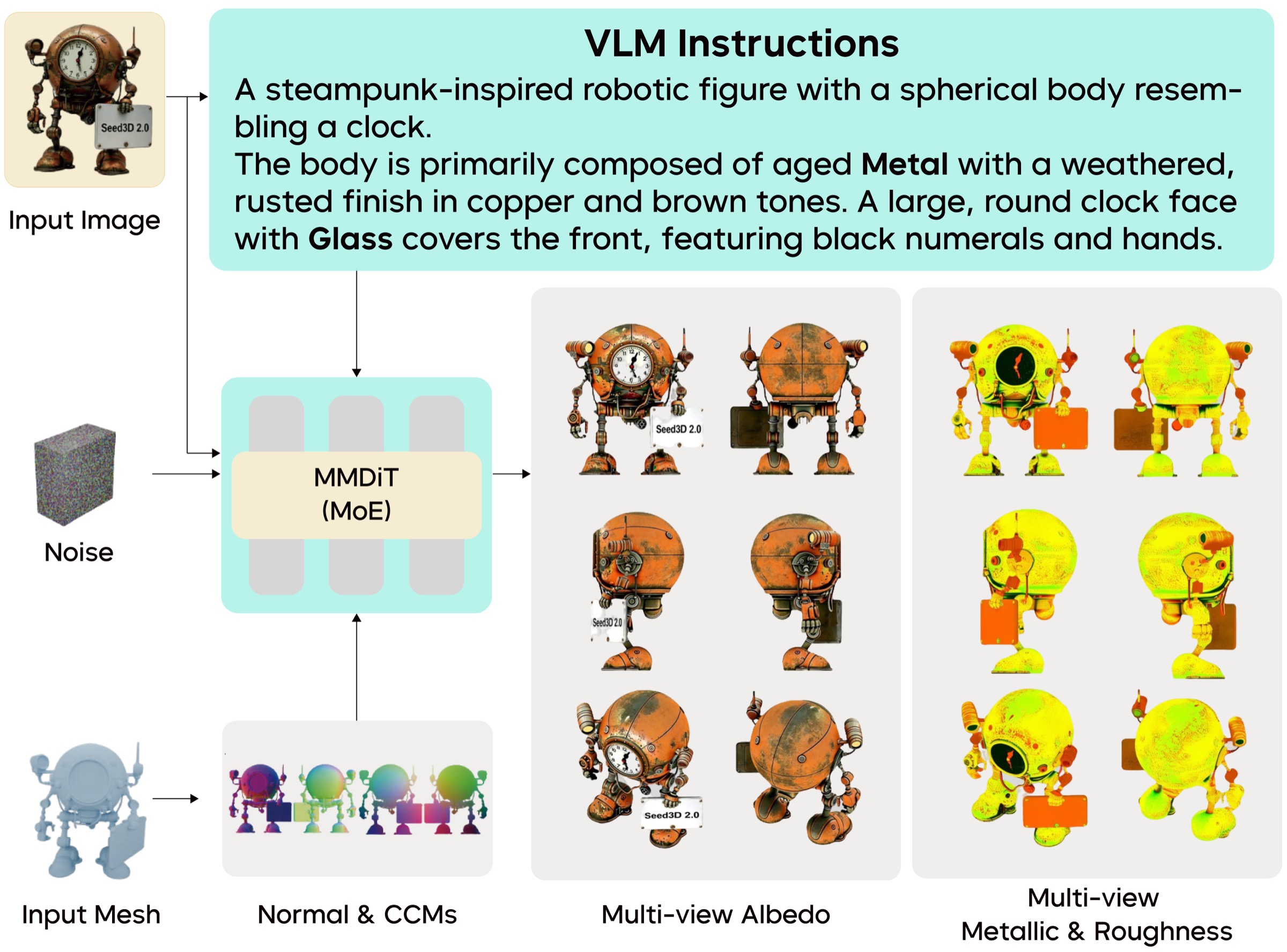

The texture pipeline is the other half of the upgrade. The old cascaded approach — diffuse here, normal there, roughness somewhere else, hope they line up — is gone. Seed3D 2.0 generates a unified PBR stack in one shot: base color, metallic, roughness, normal, all physically consistent. It’s powered by a Mixture of Experts setup for the high-frequency detail and a VLM that injects prior knowledge about what materials actually are (“this is brushed aluminum, not painted aluminum”), which is what stops the texture model from inventing physically impossible surfaces.

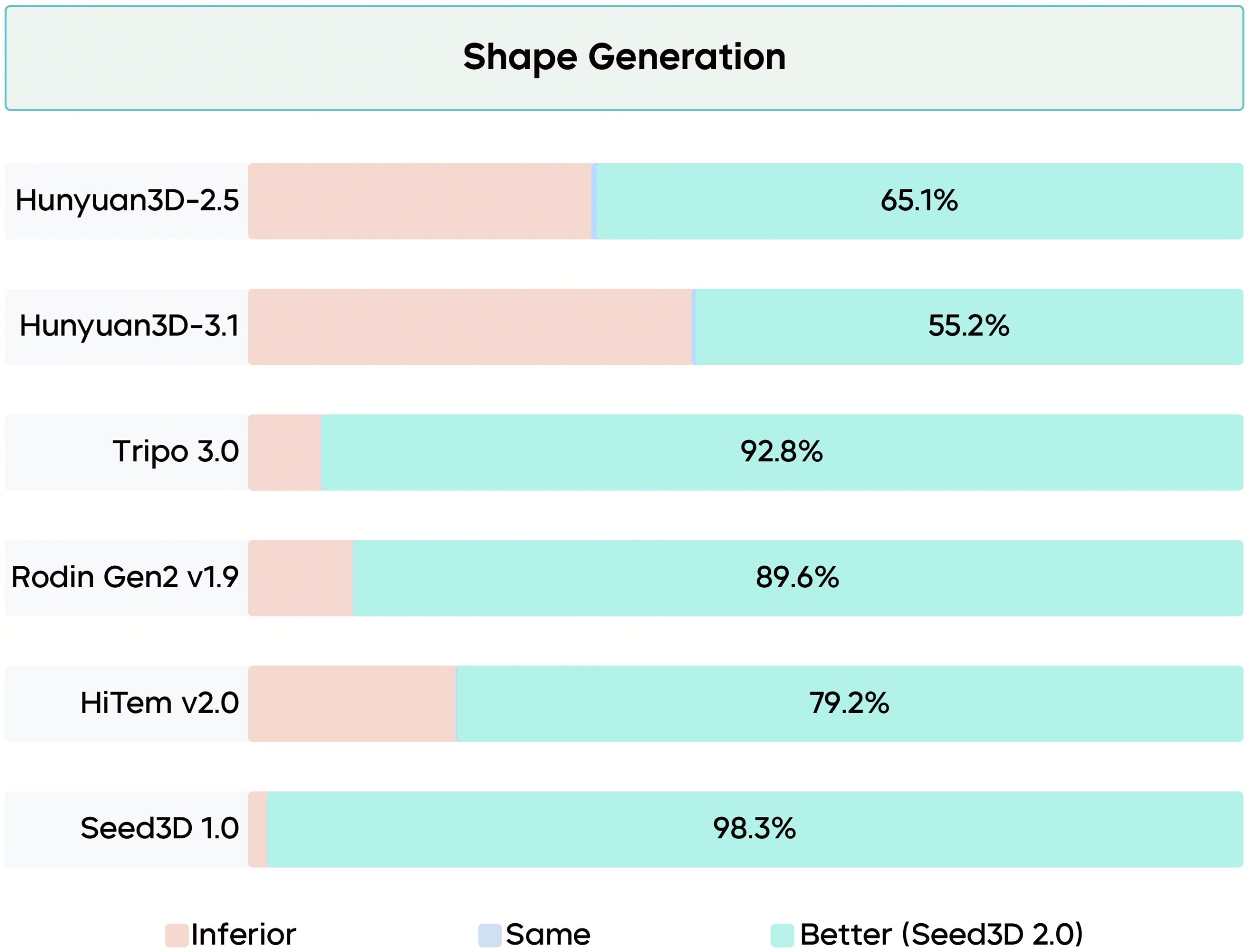

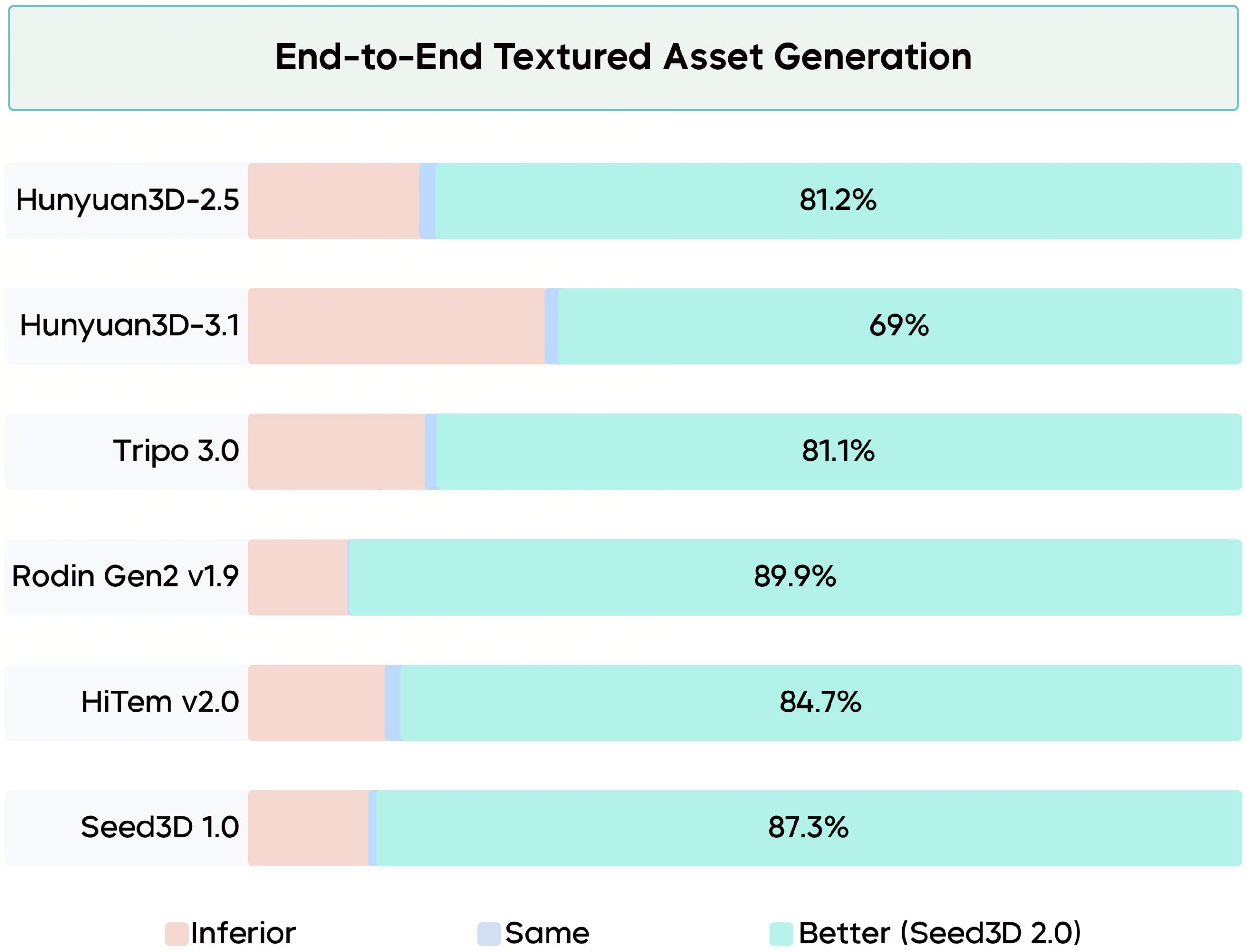

The numbers ByteDance is putting on the table: in human evaluations across 200 test cases with 60 professional reviewers, Seed3D 2.0 took an 80%+ preference rate on geometry and 69%+ on texture against current mainstream models. Take vendor numbers with the usual salt — but a 60-pro panel is a serious bar to clear.
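To put a number on that salt, here is a minimal sketch of the sampling uncertainty around an 80% preference rate. It assumes, purely for illustration, one independent judgment per test case (160 of 200 favoring Seed3D 2.0); ByteDance has not said how the 60 reviewers' votes were aggregated, so treat the counts and the `wilson_interval` helper as hypothetical.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - margin, center + margin


# ASSUMPTION: 160 of 200 test cases preferred Seed3D 2.0 (the reported 80%),
# with each test case counted as a single independent vote.
low, high = wilson_interval(160, 200)
print(f"95% interval: {low:.3f} to {high:.3f}")
```

Under those assumptions the interval runs from roughly 74% to 85%, so the headline geometry lead would survive sampling noise. What it cannot tell you is whether the 200 test cases resemble your own assets.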

Why You Should Care

Three things make this release matter beyond “another 3D model”:

- Part-level decomposition is built in. Seed3D-PartSeg ships alongside it, so the output isn’t a frozen single-mesh blob — it’s separable components ready for rigging, swapping, or instancing.

- Articulated modeling with URDF export. The model can estimate joints and dump straight to URDF, the standard robotics description format. ByteDance explicitly mentions Isaac Sim compatibility — meaning this isn’t just a hero-asset generator, it’s a simulation asset generator.

- Scene composition from text and multi-view. Not just objects: full layouts. That’s the bridge from “make me a chair” to “make me the room around it.”
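If you do get a URDF file out of the pipeline, a quick structural check is worth running before you load it into a simulator. The sketch below uses only Python's standard library and a hand-written sample URDF; the sample is hypothetical and is not Seed3D 2.0 output, and the helper names `list_joints` and `dangling_links` are made up for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical hand-written URDF: a cabinet body with one hinged door.
# It only illustrates the format; it is NOT output from Seed3D 2.0.
SAMPLE_URDF = """
<robot name="cabinet">
  <link name="body"/>
  <link name="door"/>
  <joint name="door_hinge" type="revolute">
    <parent link="body"/>
    <child link="door"/>
    <origin xyz="0.3 0 0.5" rpy="0 0 0"/>
    <axis xyz="0 0 1"/>
    <limit lower="0" upper="1.57" effort="10" velocity="1"/>
  </joint>
</robot>
"""


def list_joints(urdf_text):
    """Return name, type, parent, child, and (lower, upper) limits per joint."""
    root = ET.fromstring(urdf_text.strip())
    joints = []
    for joint in root.findall("joint"):
        limit = joint.find("limit")
        limits = None
        if limit is not None:
            # URDF treats missing lower/upper attributes as 0.
            limits = (float(limit.get("lower", "0")), float(limit.get("upper", "0")))
        joints.append({
            "name": joint.get("name"),
            "type": joint.get("type"),
            "parent": joint.find("parent").get("link"),
            "child": joint.find("child").get("link"),
            "limits": limits,
        })
    return joints


def dangling_links(urdf_text):
    """Return link names that joints reference but the file never declares."""
    root = ET.fromstring(urdf_text.strip())
    declared = {link.get("name") for link in root.findall("link")}
    referenced = set()
    for joint in root.findall("joint"):
        referenced.add(joint.find("parent").get("link"))
        referenced.add(joint.find("child").get("link"))
    return sorted(referenced - declared)


for j in list_joints(SAMPLE_URDF):
    print(j["name"], j["type"], j["parent"], "->", j["child"], j["limits"])
print("dangling links:", dangling_links(SAMPLE_URDF))
```

A generated asset that passes this check can still have wrong joint axes or implausible limits, so it complements a visual test in the simulator rather than replacing it.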

The robotics angle is the spicy one. Every embodied-AI lab on Earth is starving for synthetic, articulated, physically valid 3D scenes. If Seed3D 2.0 can pump out URDF-ready assets at scale, it doesn’t just compete with Tripo and Meshy on the asset market — it competes with NVIDIA’s whole synthetic-data pipeline for robot training.

Try It / Follow Them

- Project page: seed.bytedance.com/seed3d_2.0

- Release blog (English): Seed3D 2.0 Released — Higher Precision and Greater Usability

- API access: Volcano Ark Experience Center → Vision Model → 3D Generation → Doubao-Seed3D-2.0

- Previous release for comparison: Seed3D 1.0

IK3D Lab Take

The race for “production-ready AI 3D” has had a clear pattern: every six months a new model claims to have solved it, and every six months working artists go “yeah, almost.” Seed3D 2.0 looks like the first one where the architecture choices map cleanly to the actual failure modes: coarse-to-fine for the silhouette/detail tradeoff, and unified PBR with VLM grounding for material plausibility. That’s the right diagnosis even if the cure is overhyped.

The catch: it’s API-only on Volcano Engine, no weights, no GitHub. So you’re trusting ByteDance’s pipeline and pricing to ship anything serious. For now, this is a tool you rent, not one you own. The open-source crew (Hunyuan3D, Trellis, Step1X-3D) still holds the advantage wherever you need offline reproducibility, but by ByteDance’s own evaluation they’re now a notch behind on geometry and material quality. Watch the next 60 days: this is exactly the kind of pressure that triggers an open-weights response.

If you generate 3D assets for work, give the API a serious test on your hardest case — the one with chamfers, thin metal, and a mix of dielectric and metallic materials. That’s where the previous generation of models fell apart. If Seed3D 2.0 holds up there, the conversation about “AI 3D for production” just changed.
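One way to make that test less subjective is to look at the metallic map directly. In the metal/roughness PBR workflow, real surfaces are almost always either metal or dielectric, so a map full of in-between values usually signals a confused material. The sketch below is a rough heuristic, not part of any Seed3D tooling: the `metallic_midrange_fraction` helper, the 0.2 and 0.8 thresholds, and the tiny sample maps are all assumptions for illustration.

```python
def metallic_midrange_fraction(metallic, lo=0.2, hi=0.8):
    """Fraction of metallic-map samples strictly between lo and hi.

    `metallic` is a 2D list of floats in [0, 1]. The thresholds are
    ASSUMED defaults for illustration, not an industry standard.
    """
    values = [v for row in metallic for v in row]
    if not values:
        raise ValueError("empty metallic map")
    mid = sum(1 for v in values if lo < v < hi)
    return mid / len(values)


# Hypothetical 4x4 maps. `clean` is half dielectric, half metal, with one
# blended pixel at the seam; `muddy` is ambiguous everywhere.
clean = [
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.5, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
]
muddy = [[0.5] * 4 for _ in range(4)]

print(metallic_midrange_fraction(clean))
print(metallic_midrange_fraction(muddy))
```

A small mid-range fraction along material seams is normal. A large one on an asset that should be a clean mix of painted and bare metal is a reason to inspect the texture closely before it goes anywhere near production.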