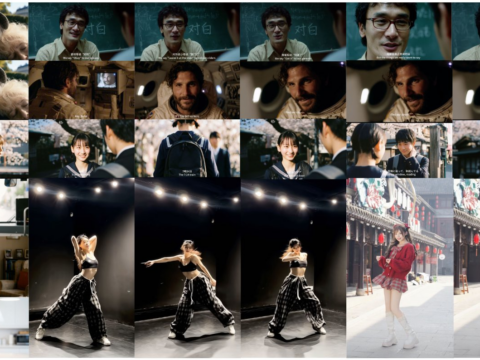

There’s a quiet crisis in AI-generated creative work. When 50,000 designers all prompt the same models, the outputs converge. Everything starts looking like… AI. FLORA’s new agent FAUNA is a direct counter-attack — an AI built explicitly to amplify your taste, not average it away.

The Story: From Canvas to Agent

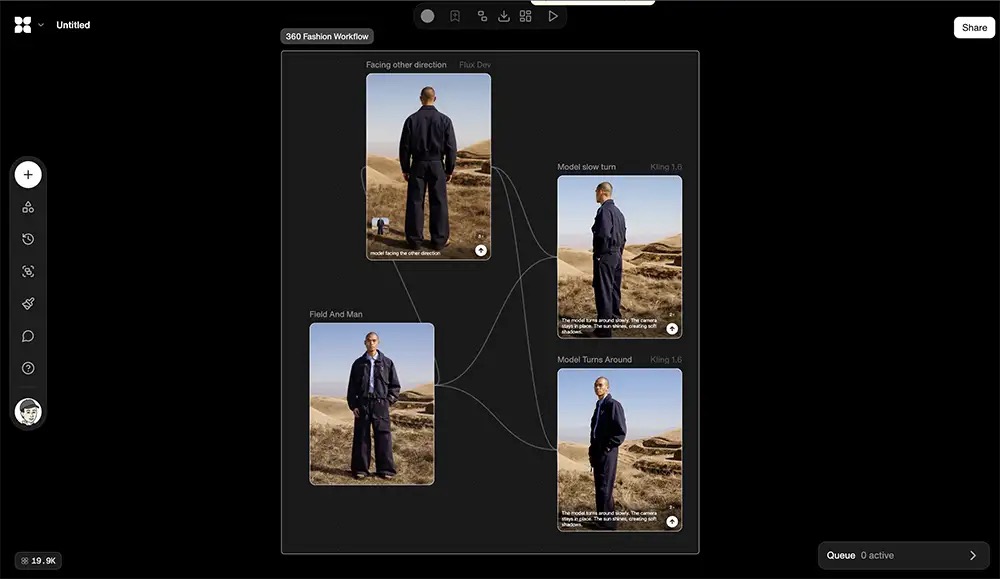

FLORA launched publicly in February 2025. The concept: an infinite, node-based canvas that aggregates 60+ AI models (image, video, SVG, text, upscaling) into one workspace. Think Figma meets ComfyUI meets a professional AI engine — except without the Python setup headache. You drag in a source image, connect it to a generation model, chain outputs to editing nodes, branch into style variants, compare side-by-side. The full genealogy of every piece of work lives on the canvas.
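
To make the canvas model concrete, here is a minimal Python sketch of a node graph that records lineage and caches upstream results. Because of the cache, branching from a shared node does not re-run its ancestors. It is an illustration under stated assumptions, not FLORA's implementation. `Canvas`, `Node`, `add`, `run`, and `lineage` are hypothetical names, and each "model" is a stand-in function that just builds a string.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Node:
    name: str
    op: Callable[..., str]  # stand-in for a model call (hypothetical)
    inputs: list[str] = field(default_factory=list)


class Canvas:
    """Hypothetical node canvas: a DAG of operations with cached outputs."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.cache: dict[str, str] = {}
        self.calls = 0  # how many node operations actually executed

    def add(self, name: str, op: Callable[..., str], *inputs: str) -> str:
        # Names are unique and inputs must already exist, so the graph stays acyclic.
        if name in self.nodes:
            raise ValueError(f"duplicate node: {name}")
        for i in inputs:
            if i not in self.nodes:
                raise KeyError(f"unknown input node: {i}")
        self.nodes[name] = Node(name, op, list(inputs))
        return name

    def run(self, name: str) -> str:
        # Reuse a cached result so shared upstream nodes run only once.
        if name in self.cache:
            return self.cache[name]
        node = self.nodes[name]
        args = [self.run(i) for i in node.inputs]
        self.calls += 1
        self.cache[name] = node.op(*args)
        return self.cache[name]

    def lineage(self, name: str) -> list[str]:
        # Ancestors in dependency order, ending with the node itself.
        order: list[str] = []

        def visit(n: str) -> None:
            if n in order:
                return
            for i in self.nodes[n].inputs:
                visit(i)
            order.append(n)

        visit(name)
        return order


canvas = Canvas()
canvas.add("source", lambda: "sneaker.jpg")
canvas.add("base", lambda src: f"render({src})", "source")
for light in ["dawn", "noon", "dusk"]:
    # Each lighting branch hangs off the same base render.
    canvas.add(f"light_{light}", lambda b, l=light: f"{b}+{l}", "base")
    canvas.run(f"light_{light}")

print(canvas.calls)  # 5: source and base ran once, plus three branches
print(canvas.lineage("light_dusk"))  # ['source', 'base', 'light_dusk']
```

Caching is the design choice that matters here. The three lighting branches share one `base` result, and `lineage` recovers the genealogy of any output from the graph alone.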

The models inside aren’t yesterday’s tools: Flux 2 Max, Imagen 4, Sora 2 Pro, Veo 3.1, Runway Gen-4 Turbo, GPT Image, Ideogram 3.0, Midjourney-quality Seedream series, Topaz upscaling — all in one place, all chainable. $52M in funding later (Redpoint Ventures Series A in January 2026), founder Weber Wong launched what the platform has been building toward: FAUNA.

FAUNA is the agent layer on top of the canvas. It has, as the team describes it, “hands.” Tell FAUNA what you need in plain language — “build me three campaign moodboards for a luxury sneaker brand, each in a different color direction” — and it constructs the entire multi-model workflow live on the canvas in front of you, step by step. It picks the right models, writes the prompts, connects the nodes, runs the generations, and organizes the results. You can watch it think. You can stop it, redirect it, branch it.

FAUNA has three modes: Assist (shows planned nodes + credit cost, waits for your approval), Auto (executes immediately), and Plan (ideates workflow structure without running anything). It can run up to 50 image, video, and text nodes in parallel. It can search the web and pull Unsplash references into your canvas as nodes mid-session. And crucially — when the session ends, every decision FAUNA made is still visible on the canvas. The thinking behind the work is as permanent as the work itself.
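
The mode split and the parallel cap can be pictured with a short asyncio sketch. Everything here is assumed for illustration. `Mode`, `execute`, and the `approve` callback are hypothetical names. The per-node credit costs are inferred from the free-tier figures in the Try It section, not taken from official pricing. `asyncio.sleep` stands in for real model calls. An `asyncio.Semaphore(50)` enforces the 50-node ceiling.

```python
import asyncio
from enum import Enum
from typing import Callable


class Mode(Enum):
    ASSIST = "assist"  # show planned nodes and credit cost, wait for approval
    AUTO = "auto"      # execute immediately
    PLAN = "plan"      # outline the workflow, never execute


# Hypothetical per-node costs inferred from the free-tier figures; not official pricing.
COST = {"image": 20, "video": 200, "text": 2}
MAX_PARALLEL = 50  # the parallel-node cap described in the article


async def execute(
    plan: list[str],
    mode: Mode,
    approve: Callable[[int], bool] = lambda cost: True,
) -> tuple[int, list[str], int]:
    """Return (estimated credits, results, peak concurrency)."""
    cost = sum(COST[kind] for kind in plan)
    if mode is Mode.PLAN or (mode is Mode.ASSIST and not approve(cost)):
        return cost, [], 0  # nothing runs; only the estimate is returned

    sem = asyncio.Semaphore(MAX_PARALLEL)
    active = peak = 0

    async def run_node(i: int, kind: str) -> str:
        nonlocal active, peak
        async with sem:  # at most MAX_PARALLEL nodes inside at once
            active += 1
            peak = max(peak, active)
            await asyncio.sleep(0.01)  # stand-in for a model call
            active -= 1
            return f"{kind}-{i}"

    results = await asyncio.gather(*(run_node(i, k) for i, k in enumerate(plan)))
    return cost, list(results), peak


plan = ["image"] * 60 + ["video"] * 2 + ["text"] * 10
cost, results, peak = asyncio.run(execute(plan, Mode.AUTO))
print(cost, len(results), peak)  # 1620 72 50
```

In Plan mode the function returns only the cost estimate. In Assist mode a declined approval does the same, so nothing is spent until the plan is accepted.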

Weber Wong didn’t come from a design background. He came from Evercore and Menlo Ventures. But the insight was sharp: “Current AI tools are built by non-creatives, for other non-creatives to feel creative.” His founding experiment — a webcam-connected generative AI art installation called TINTED MIRROR — convinced him that the real problem wasn’t model quality. It was the interface between professional creative judgment and model access. So he dropped out of NYU’s Interactive Telecommunications Program and built FLORA.

Why This Matters: The Homogenization Problem Is Real

Wong articulates three groups of creatives in the AI era:

- Group 1: Refusing AI entirely. Protecting craft. Increasingly at a speed disadvantage.

- Group 2: Using AI as a raw generator. Faster output, but it looks like everyone else’s output — because everyone is drawing from the same statistical average of the same training data.

- Group 3: Using AI as a multiplier of their taste and judgment. Not replacing creative vision — executing it at higher speed without sacrificing distinctiveness.

FAUNA is built exclusively for Group 3.

The mechanism: Style DNA. Upload 15-20 reference images (your portfolio work, a client’s brand imagery, a specific visual language you’re developing) and FLORA trains a Style Node on them. Attach that Style Node to any generation pipeline and every output conforms to the learned aesthetic. Style DNA kits are shareable across teams, so an entire agency can work in the same visual language without enforcing it manually. Beyond static references, FAUNA learns from your creative history over time: which models you favor for which tasks, your prompting patterns, what visual territory you gravitate toward. Future generations are informed by your accumulated profile, not a generic statistical average.

The production client list is telling: Netflix, Nike, Pentagram, Wonder Studios, Lionsgate, Base Design. These aren’t hobbyists exploring the tool; they are institutions with established visual identities that need AI to extend their brand language, not dilute it. Pentagram’s brand system workflow is available as a public “Technique” (a pre-built, reusable workflow) for other FLORA users to adapt.

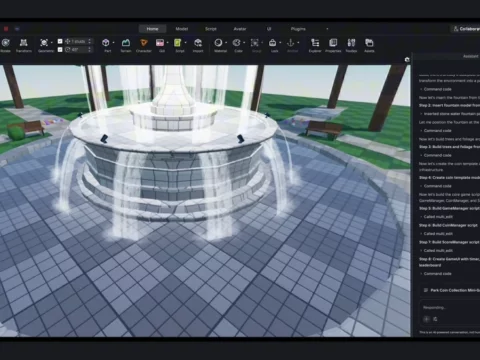

What It Means for 3D / Architecture / Game Dev

This isn’t a pure-play image generation tool. For the IK3D audience specifically:

- 3D artists: Generate concept art and material references in multiple stylistic directions simultaneously. Lock your project’s visual language via Style DNA so all your AI-assisted references stay on-brand across a production cycle. Use branching nodes to test 5 lighting conditions on the same base render without re-running everything from scratch.

- Architects: The Organic Composition Engine (FLORA’s compositing intelligence) understands depth, weight, and spatial placement, which makes it particularly useful for interior visualization and product-in-space renderings. Generate exterior and interior concepts in a client’s established style using Style DNA trained on their existing portfolio.

- Game developers: Build batch pipelines for character and environment concepts. Generate large volumes of concept assets that stay visually consistent across the same world. FLORA is actively targeting game design studios, and the procedural-workflow logic of the node canvas will feel native to anyone who’s worked with shader graphs or visual scripting.

- VFX / film: Wonder Studios uses FLORA in production, and its pre-viz workflow is shareable as a Technique. Video generation models (Veo 3.1, Sora 2 Pro, Kling 3.0) are integrated directly into the same canvas alongside image generation.

Try It

Access: florafauna.ai — no credit card required for the free tier.

Free tier: 1,000 starter credits, enough for roughly 50 images, 5 videos, or 500 text generations. Full model access, FAUNA included.

Paid plans: Starter at $18/month (20,000 credits, ~1,000 images), Studio at $54/month (60,000 credits), Scale at $200/month (250,000 credits). All plans include unlimited team seats — no per-seat fees.
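
The credit figures are internally consistent. The free tier's numbers imply roughly 20 credits per image, 200 per video, and 2 per text generation, and Starter's ~1,000 images for 20,000 credits matches the same image rate. The sketch below applies those inferred rates to each plan. They are estimates derived from the article's numbers, not FLORA's published per-model prices, and actual costs may vary by model. `PLANS`, `CREDITS_PER`, and `capacity` are illustrative names.

```python
# Plan figures as quoted in the article: (monthly price in USD, monthly credits).
PLANS = {
    "Free": (0, 1_000),
    "Starter": (18, 20_000),
    "Studio": (54, 60_000),
    "Scale": (200, 250_000),
}

# Rates inferred from the free tier: 1,000 credits ~ 50 images ~ 5 videos ~ 500 texts.
CREDITS_PER = {"image": 1_000 // 50, "video": 1_000 // 5, "text": 1_000 // 500}


def capacity(credits: int) -> dict[str, int]:
    """Estimate how many of each output a credit budget covers if spent on that type alone."""
    return {kind: credits // cost for kind, cost in CREDITS_PER.items()}


for name, (price, credits) in PLANS.items():
    per_dollar = f"{credits / price:,.0f} credits/$" if price else "free"
    print(f"{name:8} {capacity(credits)}  {per_dollar}")
```

Under these figures, Starter and Studio both come to about 1,111 credits per dollar and Scale to 1,250, so the volume discount appears only at the top tier.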

Techniques: Browse the pre-built workflows from Pentagram, Netflix, Base Design, and Wonder Studios, and adapt them as your own starting point. This is the fastest onramp for production creative teams.

For FAUNA specifically: Start in Assist mode (the default). Describe what you want. Watch it build the workflow. Click “View Steps” on any FAUNA action to see its full reasoning. This is how you learn the system while also getting work done.

IK3D Lab Take

The node canvas philosophy maps directly onto how skilled 3D artists already think: procedural, non-destructive, iterative. If you’re comfortable in shader graphs, Houdini VOPs, or ComfyUI, the FLORA canvas will feel like home rather than a learning curve. The difference is that the nodes are 60+ frontier AI models, not shaders.

The Style DNA system is potentially the most valuable feature for professional work. The homogenization problem Wong describes isn’t theoretical — it’s visible in client presentations across the industry right now. When everyone’s concepts look like they came from the same prompt on the same model, it signals that the tool is doing the thinking, not the designer. Style DNA is a direct structural solution to that, not a prompt trick.

The Techniques library — production workflows from studios like Pentagram and Wonder Studios — is the onramp worth exploring immediately. These aren’t tutorials. They’re production-tested pipelines adapted from real creative work. For 3D and architecture studios looking to integrate AI into existing workflows without rebuilding from scratch, this is a serious starting point.

One thing to watch: FLORA plans to expand FAUNA into a multi-agent system, with multiple agents running in parallel. If that ships well, FLORA becomes less a tool and more an infrastructure layer for AI-augmented creative production. $52M in funding and clients like Netflix suggest they’re building for exactly that.

“This isn’t about helping people who can’t design. It’s about making people who already know what good looks like unstoppable.” — Weber Wong, FLORA CEO