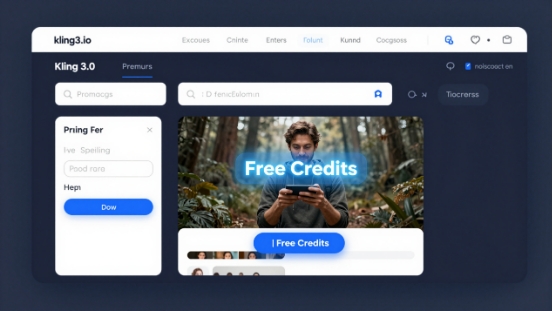

OpenAI is shutting down Sora on April 26, 2026, six days from now. The platform that promised to “revolutionize video creation” turned out to be a money pit nobody was using. Disney pulled a $1 billion partnership. Compute costs were bleeding OpenAI dry. And as Sora fades, one tool is stepping into the spotlight: Kling 3.0 from Kuaishou, which already tops the benchmark rankings with a unified video + audio + motion engine that makes Sora look like an expensive proof-of-concept.

The Story

Kling 3.0 launched February 5, 2026. Built on a Multi-modal Visual Language (MVL) architecture, it treats text, images, audio, and video as first-class citizens in a single pipeline. No separate model for audio. No separate model for images. One system that understands all of it simultaneously.

Here are the specs that actually matter:

- Native 4K at 30fps — not upscaled, generated at resolution

- Up to 15 seconds per clip, with multi-shot chaining across scenes

- Native audio — dialogue, sound effects, and music synchronized from generation, not added in post

- Director-level camera controls — pan, tilt, zoom, dolly, rack focus built into prompting

- Motion Control — extract motion from any 3-30 second reference video and apply it to a new character or scene

- 7-in-1 multimodal editor — object and background adjustments, element consistency control

- Physics simulation — gravity, balance, deformation, collision, and inertia baked in
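Two of those numbers shape how you plan a job: the 15-second cap per clip and the 3 to 30 second window for Motion Control reference videos. Here is a minimal planning sketch in Python, assuming only those limits. The function names `plan_shots` and `reference_ok` are hypothetical helpers for illustration, not part of any Kling tool or API.

```python
import math

# Limits quoted in the spec list above.
MAX_CLIP_SECONDS = 15   # longest single generated clip
REF_MIN_SECONDS = 3     # shortest accepted Motion Control reference
REF_MAX_SECONDS = 30    # longest accepted Motion Control reference


def plan_shots(total_seconds: float, max_clip: float = MAX_CLIP_SECONDS) -> list[float]:
    """Split a sequence into the fewest equal-length shots, each <= max_clip.

    Hypothetical helper: it only does the arithmetic for multi-shot
    chaining and does not call any service.
    """
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    count = math.ceil(total_seconds / max_clip)
    return [total_seconds / count] * count


def reference_ok(duration_seconds: float) -> bool:
    """Check a reference video's length against the 3 to 30 second window."""
    return REF_MIN_SECONDS <= duration_seconds <= REF_MAX_SECONDS


if __name__ == "__main__":
    shots = plan_shots(40)  # a 40-second sequence needs three shots
    print(len(shots), [round(s, 2) for s in shots])
    print(reference_ok(12), reference_ok(45))
```

Equal-length shots are just one reasonable default. In practice you would cut on story beats and only use the cap as an upper bound.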

The result: a benchmark leader with an Elo score of 1243, ranked #1 in the AI video landscape as of April 2026, at an entry price of $6.99/month with a commercial license.
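For a feel of what an Elo gap means, here is a small sketch of the standard Elo expected-score formula. It assumes the leaderboard uses the conventional 400-point scale, which the source does not confirm, and the 1200-rated opponent is a made-up comparison point rather than any real model's score.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for A over B under standard Elo.

    Assumption: the conventional 400-point logistic scale. The benchmark
    behind the 1243 figure may use a different scale.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


if __name__ == "__main__":
    # 1243 is the rating quoted above; 1200 is a hypothetical rival.
    print(round(elo_expected_score(1243, 1200), 3))
```

Under those assumptions, a 43-point lead works out to being preferred in roughly 56% of head-to-head comparisons: a real edge, not a blowout.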

Why You Should Care

For 3D artists and animators, Kling 3.0’s Motion Control feature is a mocap studio in your browser. You shoot yourself (or any human) doing a movement on your phone. Kling extracts the skeletal motion, timing, and physics. You apply it to a photorealistic character or 3D reference. The result is production-quality animation reference that previously required expensive motion capture hardware.

For architects and world builders, the multi-shot capability with director-level camera controls turns textual descriptions into cinematic walkthroughs. Pan across a facade. Dolly into a courtyard. Rack focus on a material detail. These aren’t random AI video outputs — they’re directed sequences you compose with professional cinematography language.

For game developers and narrative designers, the multi-shot generation with consistent characters across cuts is essentially a pre-viz pipeline. You can draft entire cutscene sequences, character reaction shots, and environmental storytelling before a single asset is built in-engine.

And the numbers back this up: Kling's global weekly active users jumped 4% in a single week following the Sora shutdown news. That’s organic momentum, not marketing spend.

Try It

The platform: klingai.com — free credits to start, no credit card required for the trial tier.

Pricing tiers:

- Standard — $6.99/mo (commercial license, 1080p)

- Pro — $36/mo (4K, priority queue, Motion Control included)

- API access: available through Replicate, listed there as Kling 3.0 Motion Control
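If you are deciding between the two subscription tiers, the choice comes down to two questions: do you need 4K, and do you need Motion Control? Here is a rough sketch of that decision in Python. It assumes that features listed only under Pro (4K, Motion Control) are not available on Standard, which the tier list implies but does not state outright. The `Tier` class and `cheapest_tier` function are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    usd_per_month: float
    max_resolution: str
    motion_control: bool


# Prices and resolutions come from the list above.
# Assumption: Standard lacks Motion Control because only Pro lists it.
TIERS = [
    Tier("Standard", 6.99, "1080p", False),
    Tier("Pro", 36.00, "4K", True),
]


def cheapest_tier(need_4k: bool, need_motion_control: bool) -> Tier:
    """Return the lowest-priced tier that satisfies both requirements."""
    candidates = [
        t for t in TIERS
        if (not need_4k or t.max_resolution == "4K")
        and (not need_motion_control or t.motion_control)
    ]
    return min(candidates, key=lambda t: t.usd_per_month)


if __name__ == "__main__":
    pick = cheapest_tier(need_4k=False, need_motion_control=True)
    print(pick.name, f"${pick.usd_per_month * 12:.2f}/year")
```

Check the current plan details on klingai.com before committing, since tier contents can change.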

The official Motion Control user guide walks you through the full workflow: reference video requirements, output settings, and tips for character consistency. Start there if you want to experiment with motion transfer.

IK3D Lab Take

Kling 3.0 isn’t just “the best AI video tool right now” — it’s the first one that feels like a director’s toolkit rather than a novelty generator. The Motion Control feature alone is worth the subscription price for anyone doing character animation reference work, pre-viz, or interactive narrative prototyping.

Sora’s exit also matters strategically. OpenAI built Sora as a spectacle (and got spectacularly burned by it). Kuaishou built Kling as a tool. The tool won. That’s a lesson the entire AI creative industry should internalize: makers don’t want demos, they want workflows.

The question we’re already asking: what happens when Motion Control gets real-time output? Because then it’s not just reference — it’s a live puppeteering system for digital characters. And that’s the bridge between AI video and interactive 3D that everyone in this space has been quietly waiting for.

Watch this space.