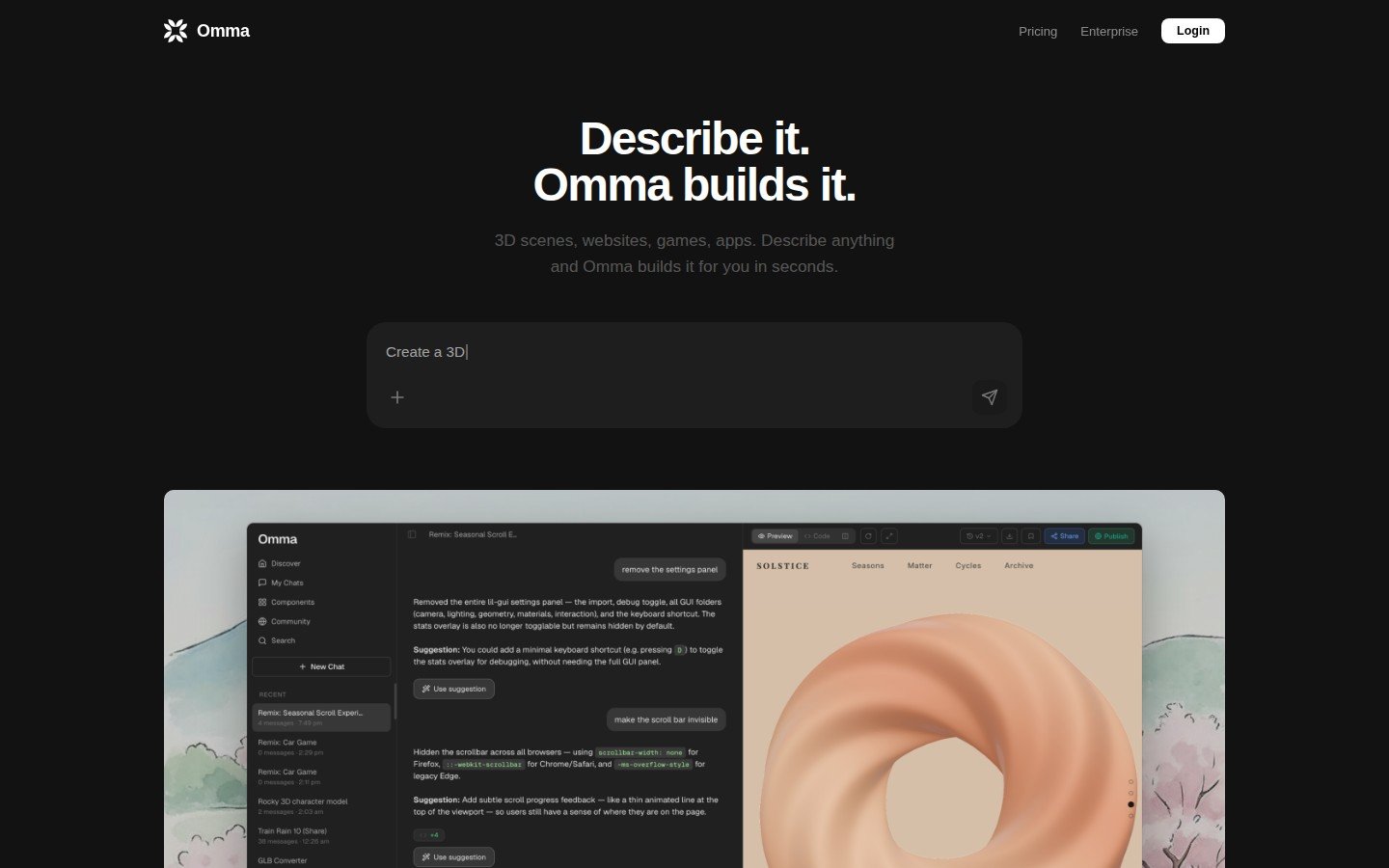

You describe a 3D landing page. Omma builds it — fully interactive, deploy-ready, with real 3D scenes, motion design, and UI — in under a minute. No design experience required. No boilerplate. Just creation.

The Story

On March 24, 2026, Spline launched Omma — a standalone AI canvas that does something no other design tool has managed before: it unifies 3D generation, motion design, animation, and functional UI into a single natural-language workflow.

Spline isn’t a newcomer. Founded in 2020, the company already has 3+ million designers on its platform, including teams at Google, Datadog, Robinhood, and UPS. It has been building the browser-based 3D design layer that the web didn’t know it needed, and Omma is its biggest swing yet. The bet is backed by $32M raised from Third Point Ventures, Gradient Ventures, and Y Combinator.

Here’s what makes Omma technically interesting: it doesn’t run one AI. It runs three AI agents in parallel. One handles code generation via LLMs. A second manages 3D mesh creation. A third generates images. All three fire simultaneously from your single text prompt. The outputs get assembled, compressed, and optimized for web — including WebGPU acceleration — and you get an editable, production-ready result.
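To make the fan-out concrete, here is a minimal Python sketch of the pattern described above: three tasks launched concurrently from one prompt, then assembled into a single result. This is an illustration only, not Omma's implementation. The function names, the simulated delay, and the return values are all hypothetical placeholders.

```python
import asyncio

# Hypothetical stand-ins for the three agents. Omma's real internals
# are not documented here; each function just simulates some latency.
async def code_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)  # pretend to call an LLM
    return f"code for: {prompt}"

async def mesh_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)  # pretend to generate a 3D mesh
    return f"mesh for: {prompt}"

async def image_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)  # pretend to generate images
    return f"images for: {prompt}"

async def generate(prompt: str) -> dict[str, str]:
    # gather() runs the three coroutines concurrently and returns
    # their results in the same order as the arguments.
    code, mesh, images = await asyncio.gather(
        code_agent(prompt),
        mesh_agent(prompt),
        image_agent(prompt),
    )
    # "Assembly" here is just bundling the three outputs together.
    return {"code": code, "mesh": mesh, "images": images}

result = asyncio.run(generate("a 3D landing page"))
print(result)
```

Because the three tasks overlap, total wait time in this sketch is roughly that of the slowest task rather than the sum of all three.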

Alejandro Leon, Founder & CEO of Spline, put it bluntly: “What makes Omma a true game changer is flexibility. Creators can fully generate interactive motion design through natural language prompts.” COO Caroline Mack went further: “Omma is as close to magic as it’s ever felt to build digital experiences.”

Why You Should Care

Until now, AI design tools have shared the same flaw: they generate mockups. Static images. Isolated components. Things you still have to rebuild in a real tool before they can ship. Omma skips that step entirely. What it generates is the thing. Interactive. Functional. Deployable.

For the IK3D audience specifically — 3D artists, game devs, architects, digital creators — this matters enormously:

- 3D artists can now generate browser-native 3D experiences around their work without touching code. Portfolio sites, product showcases, interactive demos — describe it, ship it.

- Architects and designers can prototype interactive presentations, virtual tours, or spatial UI concepts in minutes instead of days.

- Game devs can build live demos, pitch sites, and interactive game world previews without a separate web team.

- Generative artists get an entirely new canvas: 3D web experiences triggered by scroll events, mouse movement, and real-time data feeds.

Under the hood, the engineering is also solid. Omma’s 2026 engine uses WebGPU, the successor to WebGL, providing up to 3x faster rendering that holds up on mobile. It accepts 3D files directly (GLB, GLTF, OBJ) alongside images, video, CSV, and JSON. Feed it your own assets and it builds around them. Apple Vision Pro support via Spline Mirror lets you validate spatial designs in real time, on device.
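If you are gathering assets before a session, a small pre-flight check can sort files into the input categories listed above. The sketch below is a local helper, not part of any Omma API. The 3D and data extensions come from the list above; the image and video extensions are assumptions, since no specific formats are named for those.

```python
from pathlib import Path

# Named in the article as accepted 3D and data formats.
MODEL_EXTS = {".glb", ".gltf", ".obj"}
DATA_EXTS = {".csv", ".json"}

# Assumptions: the article says "images" and "video" without naming
# formats, so these sets are illustrative guesses only.
IMAGE_EXTS = {".png", ".jpg", ".jpeg"}
VIDEO_EXTS = {".mp4"}

def classify_asset(filename: str) -> str:
    """Return a rough category for a file based on its extension."""
    ext = Path(filename).suffix.lower()
    if ext in MODEL_EXTS:
        return "3d-model"
    if ext in DATA_EXTS:
        return "data"
    if ext in IMAGE_EXTS:
        return "image"
    if ext in VIDEO_EXTS:
        return "video"
    return "unsupported"

for name in ["Chair.GLB", "sales.csv", "hero.png", "notes.txt"]:
    print(name, "->", classify_asset(name))
```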

And it reached #6 on Product Hunt on launch day — so the market noticed.

Try It / Follow Them

Omma is live now at omma.build. There’s a free tier (50 credits/month) that’s enough to experiment seriously. Paid plans start at $39/month for Pro (2,000 credits), scaling to $129/seat for Max.
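For a quick sense of what those numbers mean per credit, here is the arithmetic as a short Python sketch. It uses only the Free and Pro figures quoted above. The Max tier is left out because its credit allowance isn't stated here, and how many credits a single generation consumes is also not covered.

```python
# Figures quoted in the article.
FREE_CREDITS_PER_MONTH = 50
PRO_PRICE_USD = 39
PRO_CREDITS_PER_MONTH = 2000

# Effective price of one credit on the Pro plan.
cost_per_credit = PRO_PRICE_USD / PRO_CREDITS_PER_MONTH

# How many times larger the Pro allowance is than the free one.
pro_multiple = PRO_CREDITS_PER_MONTH // FREE_CREDITS_PER_MONTH

# What the free tier's credits would cost at the Pro per-credit rate.
free_tier_value = FREE_CREDITS_PER_MONTH * cost_per_credit

print(f"Pro cost per credit: ${cost_per_credit:.4f}")
print(f"Pro allowance vs free: {pro_multiple}x")
print(f"Free tier at Pro rates: ${free_tier_value:.2f}")
```

In other words, Pro works out to just under two cents per credit, with 40 times the free allowance.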

Spline also ran a Buildathon in partnership with Contra, with $15K in prizes for the best things built with Omma. Follow @splinetool and @omma_ai on X for the best community outputs. The caliber of what people are already building (car games with physics, 16,000-hair physics simulations, full reactive data dashboards) is genuinely surprising for a tool that’s barely two weeks old.

For a deeper look, check Spline AI Generate for standalone 3D model generation, and read the Omma pricing page for credit breakdowns before committing.

IK3D Lab Take

Omma is the first tool that genuinely closes the gap between “I have a 3D idea” and “people can experience it on the web right now.” That pipeline used to require a 3D artist, a web developer, and at minimum a week. Now it can be one person and one afternoon.

The multi-agent architecture is the right call technically: parallelizing code, mesh, and image generation rather than running them sequentially means the output arrives faster and the individual agents can specialize. The open question is consistency and the complexity ceiling. Early demos show impressive results for landing pages and showcases, but heavily custom interactive experiences will need more hands-on editing in Spline’s visual tools. That editing layer exists and is mature, so the fallback is solid.

What we’re watching: whether Omma can handle architectural interactivity such as parametric spaces, real-data-driven 3D environments, and BIM-connected visualizations. It already accepts GLB and GLTF files; if it can also connect those scenes to live data, it becomes a serious prototyping layer for built-environment visualization. Early signs say yes.

This is one to have open in a tab. Try it. Break it. Push it past landing pages and see what it does with something weird. That’s where the interesting things always are.