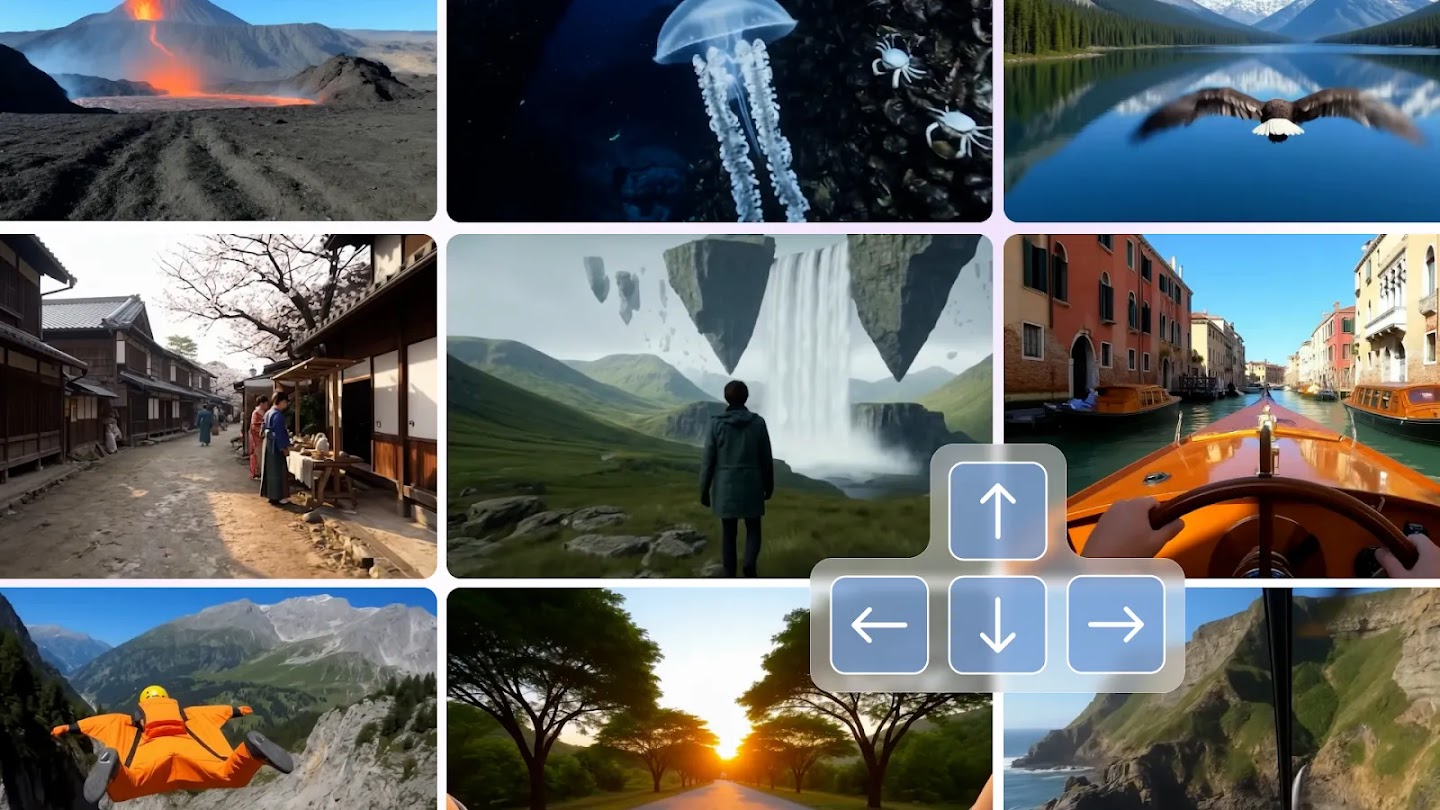

Type a text prompt. Walk inside it. In real time. At 24 frames per second. That’s Genie 3 — Google DeepMind’s world model that doesn’t just generate environments, it lets you live inside them. This isn’t video generation. This is something else entirely.

The Story

Google DeepMind has been quietly building toward this for years. Genie 1 (2024) showed it was possible to generate playable 2D game environments from a single image. Genie 2, announced later in 2024, pushed into 3D and accepted keyboard and mouse input, but it did not run in real time and its worlds held together for about a minute at most. Genie 3, previewed in August 2025, opened up through Project Genie in January 2026, and showcased at GDC 2026, blows the doors off both.

The core premise: describe a world in text, and Genie 3 generates it as a navigable, interactive 3D environment — rendered at 720p, 24 fps, with physical simulations running in real time. Water ripples. Light shifts. Weather changes on command. You explore it like a game, not like a video.
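To put those numbers in perspective, here is a quick back-of-the-envelope calculation. It assumes "720p" means 1280x720 pixels, and the 3-minute session length is purely illustrative, not an official figure.

```python
# Back-of-the-envelope numbers for a 720p, 24 fps stream.
# Assumption: "720p" means 1280x720 pixels; the session length is illustrative.
WIDTH, HEIGHT, FPS = 1280, 720, 24

frame_budget_ms = 1000 / FPS              # time available to produce each frame
pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * FPS


def frames_in_session(minutes: float, fps: int = FPS) -> int:
    """Number of frames generated over a session of the given length."""
    return round(minutes * 60 * fps)


print(f"{frame_budget_ms:.1f} ms per frame")
print(f"{pixels_per_frame:,} pixels per frame")
print(f"{pixels_per_second:,} pixels per second")
print(f"{frames_in_session(3):,} frames in a 3-minute session")
```

In other words, each new frame has to be ready in roughly 42 milliseconds, and a session of a few minutes adds up to thousands of frames.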

What makes this technically wild is how it works. Rather than processing raw pixels, Genie 3 uses a video tokenizer that compresses frames into discrete tokens, combined with an autoregressive dynamics model that generates the world frame by frame, with each frame informed by what came before and by what you’re doing right now. Your movement and text commands are fed into the generation loop in real time, multiple times per second.
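The shape of that loop is easier to see in a toy version. The sketch below is not Genie 3's code and uses no real model: `toy_dynamics`, the token counts, and the action table are all hypothetical stand-ins. It only illustrates the idea of producing each frame's tokens from a window of past frames plus the current user action.

```python
import random
from collections import deque

# All sizes below are hypothetical and tiny; a real tokenizer uses far more tokens.
VOCAB_SIZE = 16        # number of distinct discrete token values
CONTEXT_FRAMES = 8     # how many past frames the toy model can "see"
ACTIONS = {"idle": 0, "forward": 1, "back": 2, "left": 3, "right": 4}


def toy_dynamics(history, action, rng):
    """Stand-in for a learned dynamics model: next-frame tokens from history + action."""
    prev = history[-1]
    # Fold the whole visible history into one number so older frames matter too.
    memory = sum(sum(frame) for frame in history) % VOCAB_SIZE
    return [
        (tok + ACTIONS[action] + memory + rng.randrange(2)) % VOCAB_SIZE
        for tok in prev
    ]


def rollout(first_frame, actions, seed=0):
    """Generate one frame per action, feeding each new frame back in as context."""
    rng = random.Random(seed)
    history = deque([first_frame], maxlen=CONTEXT_FRAMES)
    frames = [first_frame]
    for action in actions:
        next_frame = toy_dynamics(history, action, rng)
        history.append(next_frame)   # the new frame becomes context for the next step
        frames.append(next_frame)
    return frames


frames = rollout([0, 1, 2, 3], ["forward", "forward", "left", "idle"])
for index, frame in enumerate(frames):
    print(index, frame)
```

The point of the sketch is the feedback: every generated frame goes straight back into the history, so a different action at any step changes everything that follows.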

There’s also the “promptable world events” feature — during navigation you can inject new text commands to modify the world on the fly. Change the weather. Introduce a new character. Shift the time of day. The world adapts without restarting the session. This is not a preset environment. It’s genuinely generative, moment to moment.
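One way to picture promptable world events is as extra text conditions that get attached to a running session. The class below is a hypothetical illustration, not a real Genie or Project Genie API: `ToyWorldSession`, `inject_event`, and `step` are invented names, and "frames" here are just dictionaries recording what each frame would be conditioned on.

```python
from dataclasses import dataclass, field


@dataclass
class ToyWorldSession:
    """Hypothetical stand-in for an interactive session; not a real Genie API."""
    prompt: str
    events: list = field(default_factory=list)   # (frame_index, text) pairs
    frame_index: int = 0
    log: list = field(default_factory=list)

    def inject_event(self, text: str) -> None:
        """Attach a text event mid-session; it conditions every later frame."""
        self.events.append((self.frame_index, text))

    def step(self, action: str) -> dict:
        """Record what the next frame would be conditioned on."""
        self.frame_index += 1
        frame = {
            "frame": self.frame_index,
            "action": action,
            "conditioned_on": [self.prompt] + [text for _, text in self.events],
        }
        self.log.append(frame)
        return frame


session = ToyWorldSession(prompt="a coastal village at noon")
session.step("forward")
session.inject_event("it starts to rain")
session.step("forward")
session.inject_event("night falls")
last = session.step("left")
print(last)
```

Note that the frame counter never resets: the events change what later frames are conditioned on while the same session keeps running.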

Why You Should Care

For game developers, this is a paradigm shift in prototyping speed. Describe a dungeon, a city district, a haunted forest — explore it in seconds, iterate on vibes before touching a single engine. You’re not looking at a concept render; you’re walking through a playable sketch.

For world-builders and narrative designers, Genie 3 is a spatial brainstorming tool unlike anything that existed before. You can explore historical and imagined settings (ancient Rome, a coastal village in medieval Japan, a cyberpunk megacity in 2087) not as static illustrations, but as traversable environments. The research applications for AI training (embodied agents, robotics simulation) are also massive, and DeepMind is explicitly targeting that use case too.

For 3D artists and architects, think mood boarding in 3D space. The limitations are real (sessions last a few minutes, text rendering inside scenes is shaky, multi-agent interactions are limited), but as a creative exploration layer? It’s already ahead of anything else on the market.

The real kicker: the big-name video models are not doing this. Sora, Veo 3, Runway Gen-4: they all generate video. Genie 3 generates an interactive world. That’s a different category entirely. And DeepMind got there first.

Try It / Follow Them

- Project Genie (early access): labs.google/projectgenie — currently rolling out to Google AI Ultra subscribers in the US. A prompt guide is available on the DeepMind models page.

- DeepMind Genie 3 research page: deepmind.google/models/genie/ — technical details and paper links.

- Google DeepMind blog: deepmind.google/blog — the full announcement with video demonstrations of the system in action.

For now, access is limited to early researchers and Google AI Ultra subscribers (US only). International availability is unannounced. But keep an eye on this one — given the GDC 2026 showcase, a broader rollout seems close.

IK3D Lab Take

We’ve been watching the world generation space closely — World Labs, Genie 2, various NeRF-adjacent experiments. But Genie 3 lands differently. The jump from passive generation to active navigation is the same jump as from cinema to video games. It’s not incremental. And unlike many research papers that are cool but unusable, Project Genie is already live (even if access is restricted).

The team is honest about the limitations and documents them clearly: a few minutes per session, imperfect geography, no stable text rendering. But these are the limitations of v1 of a genuinely new category of tool. For the IK3D Lab audience (game devs, 3D artists, architects, worldbuilders), this is the one to track. Not next year. Now.

The road from Genie 3 to full real-time navigable AI worlds is short. And DeepMind is already on it.