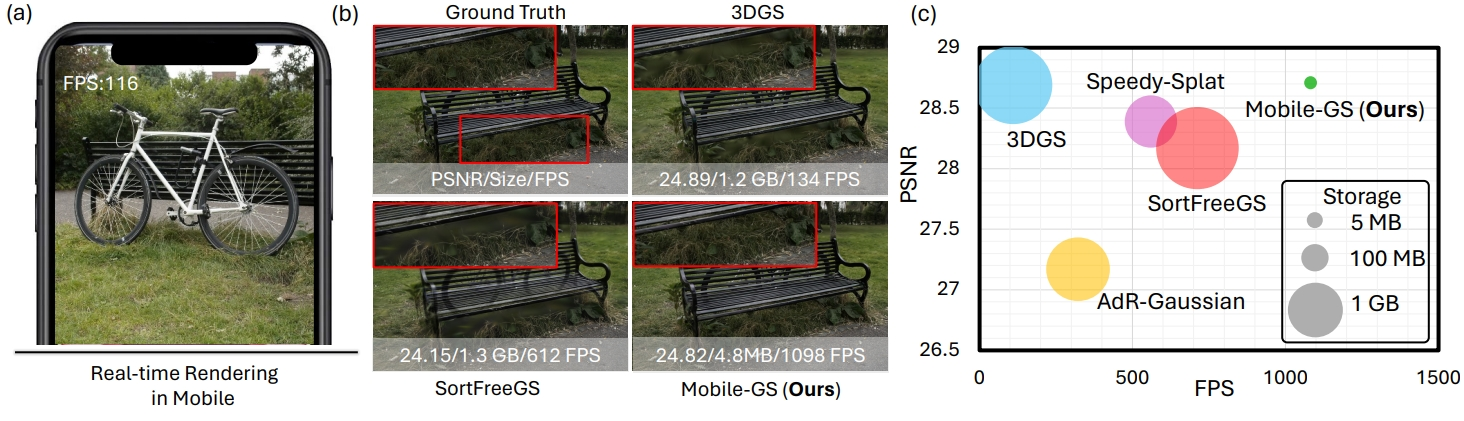

Until now, Gaussian Splatting meant a beefy GPU. Server-side rendering. Desktop-only viewers. As of ICLR 2026, that’s over. Mobile-GS runs photorealistic 3DGS at 116 FPS on a Snapdragon 8 Gen 3, with models that average 4.8 MB.

The Story

3D Gaussian Splatting has been one of the biggest breakthroughs in photorealistic 3D rendering over the last two years. Capture a scene with a phone, process it overnight, and you get a stunningly detailed 3D representation you can navigate in real time. The catch? You needed a discrete GPU to view it at anything resembling a usable frame rate. Deploying to mobile? Forget it.

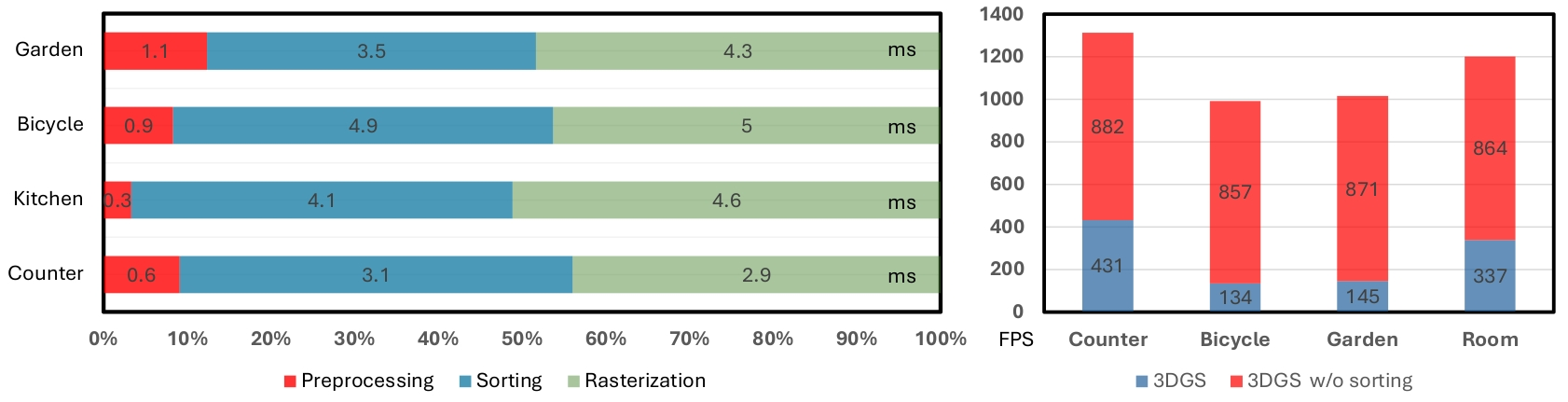

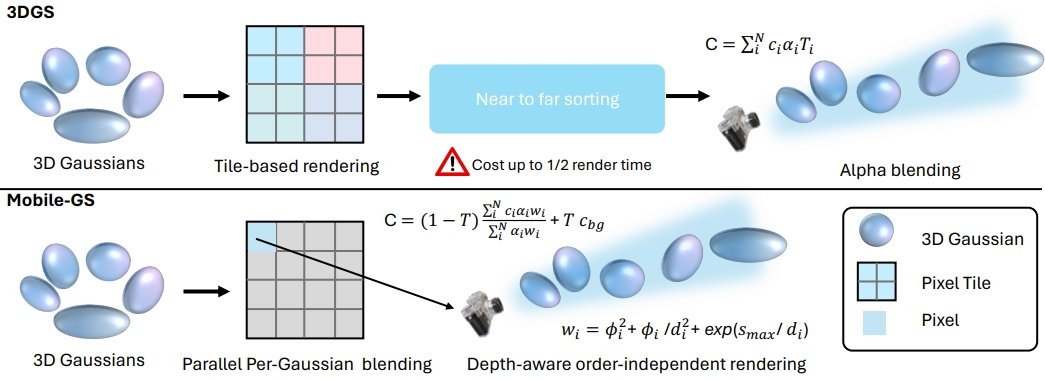

Mobile-GS, published at ICLR 2026 by researchers from the University of Technology Sydney, Adelaide University, and Li Auto, just killed that barrier. The paper’s core insight is deceptively clean: the reason 3DGS is so expensive on mobile is the depth sorting. Every frame, the renderer has to sort millions of Gaussian primitives by depth before alpha-blending them. On a phone GPU, that’s a catastrophic bottleneck.
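To see why the sort matters, here is a minimal single-pixel sketch of standard front-to-back alpha blending. It is a toy illustration, not code from the paper: the `composite` function and the `(depth, alpha, color)` tuple layout are assumptions made for this example, and colors are single grayscale values to keep it short.

```python
def composite(splats, sort=True):
    """Blend (depth, alpha, color) splats covering one pixel.

    With sort=True the splats are ordered nearest-first, which is the
    per-frame depth sort that classic 3DGS rendering relies on.
    """
    ordered = sorted(splats, key=lambda s: s[0]) if sort else list(splats)
    color = 0.0
    transmittance = 1.0  # fraction of light not yet absorbed
    for _, alpha, c in ordered:
        color += transmittance * alpha * c
        transmittance *= 1.0 - alpha
    return color


# A far white splat listed before a near black splat (hypothetical values).
splats = [(2.0, 0.5, 1.0), (1.0, 0.5, 0.0)]

print(composite(splats))              # 0.25, correct: black is in front
print(composite(splats, sort=False))  # 0.5, wrong: blended in arrival order
```

Skip the sort and the pixel comes out twice as bright as it should. A real renderer repeats this for every pixel and every frame, with far more than two splats, which is where the cost comes from.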

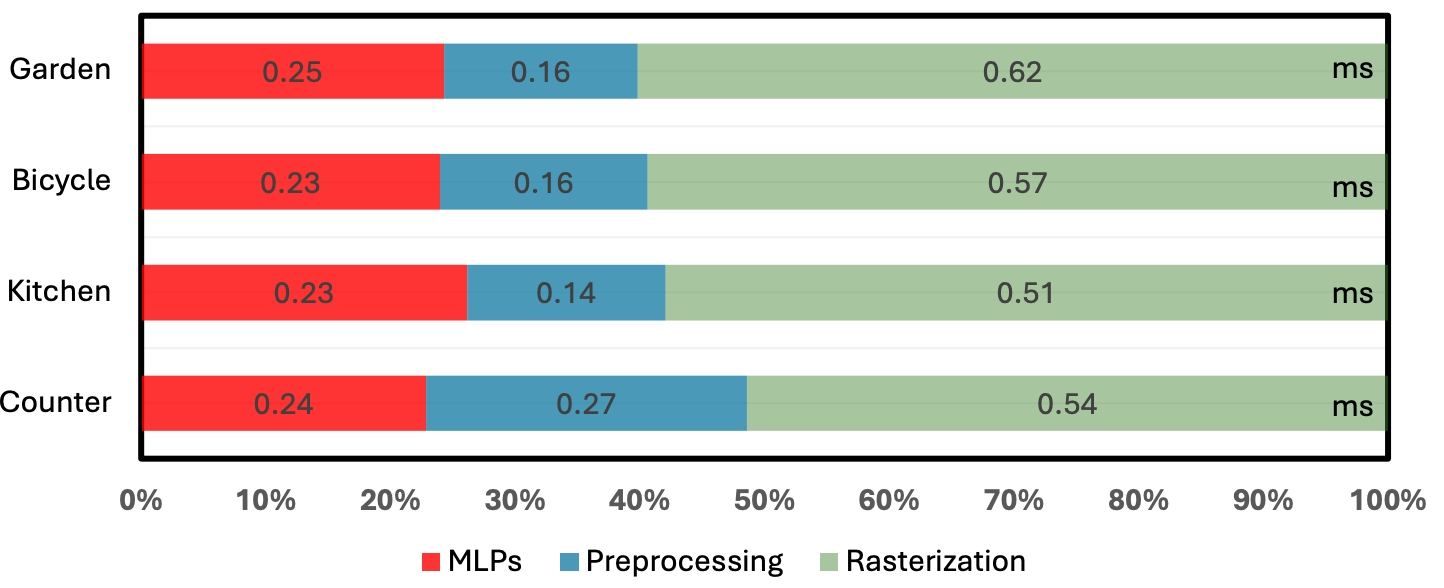

Their solution: depth-aware order-independent rendering. Instead of sorting Gaussians before rendering, they redesign the rendering scheme so sorting is never needed. Then, to handle the transparency artifacts this would normally introduce, they add a neural view-dependent enhancement layer — a lightweight network that corrects appearance based on viewing direction and geometry. No sorting, no artifacts.
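The general idea of order-independent blending can be sketched with a classic technique from real-time graphics, weighted blended transparency, where each splat gets a depth-dependent weight and everything is accumulated with plain sums. This is a stand-in to illustrate the concept, not Mobile-GS's actual formulation, and it leaves out the neural enhancement step entirely. The function name, the exponential weight, and the `falloff` parameter are all assumptions made for this example.

```python
import math
import random


def blend_order_independent(splats, falloff=1.0):
    """Blend (depth, alpha, color) splats for one pixel without sorting.

    Nearer splats get larger weights, and the accumulators are plain
    sums and products, so the input order does not change the result
    (up to floating-point rounding).
    """
    weighted_color = 0.0
    weight_sum = 0.0
    transmittance = 1.0  # product of (1 - alpha) over all splats
    for depth, alpha, c in splats:
        w = alpha * math.exp(-falloff * depth)  # hypothetical depth weight
        weighted_color += w * c
        weight_sum += w
        transmittance *= 1.0 - alpha
    if weight_sum == 0.0:
        return 0.0
    coverage = 1.0 - transmittance
    return (weighted_color / weight_sum) * coverage


splats = [(1.0, 0.5, 0.0), (2.0, 0.5, 1.0), (3.0, 0.3, 0.5)]
shuffled = splats[:]
random.Random(0).shuffle(shuffled)

print(blend_order_independent(splats))
print(blend_order_independent(shuffled))  # same value, different order
```

The trade-off is that a weighted average only approximates true occlusion, which is the kind of error a view-dependent correction step is meant to absorb.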

The storage problem gets attacked with equal aggression. Original 3DGS models weigh hundreds of megabytes. Mobile-GS deploys three compression techniques in combination: first-degree spherical harmonics distillation (knowledge distillation from a full SH model), neural vector quantization of Gaussian attributes, and contribution-based pruning that removes low-impact Gaussians. The result: a scene that would normally take 200–400 MB fits in 4.8 MB on average, roughly 40 to 80 times smaller.
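Some back-of-the-envelope arithmetic shows how much each technique has to contribute. The sketch below assumes the uncompressed float32 layout of the original 3DGS reference implementation (position, scale, rotation quaternion, opacity, and RGB spherical harmonics up to degree 3). Mobile-GS's actual on-disk format is not described here, so treat the per-Gaussian byte counts as an illustration only. The helper names are made up for this example.

```python
BYTES_PER_FLOAT32 = 4


def floats_per_gaussian(sh_degree):
    """Float count for one Gaussian in an uncompressed 3DGS-style layout."""
    geometry = 3 + 3 + 4 + 1          # position, scale, quaternion, opacity
    color = 3 * (sh_degree + 1) ** 2  # RGB times (degree + 1)^2 SH coefficients
    return geometry + color


def bytes_per_gaussian(sh_degree):
    return floats_per_gaussian(sh_degree) * BYTES_PER_FLOAT32


full = bytes_per_gaussian(3)     # degree-3 SH, as in original 3DGS
reduced = bytes_per_gaussian(1)  # first-degree SH
print(full, reduced, round(full / reduced, 2))  # 236 92 2.57

# Overall ratio implied by the sizes quoted above.
for original_mb in (200, 400):
    print(original_mb, "MB ->", round(original_mb / 4.8, 1), "x smaller")
# 200 MB -> 41.7 x smaller
# 400 MB -> 83.3 x smaller
```

Dropping to first-degree SH alone buys only about 2.6x under these assumptions. The rest of the 40x to 80x reduction has to come from quantization and pruning.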

The Numbers Are Absurd

Let those sink in for a second:

- 116 FPS at 1600×1063 resolution on Snapdragon 8 Gen 3 (the chip in 2024 flagship Android phones)

- 1098 FPS on unbounded outdoor scenes on an RTX 3090

- 4.8 MB average model size

- Visual quality comparable to original 3DGS — not degraded, comparable

- Real-time rendering on mobile at quality the paper reports as better than previous lightweight GS methods

This isn’t a theoretical demo. The paper benchmarks on standard 3DGS datasets (Mip-NeRF 360, Tanks and Temples, Deep Blending) and the results are consistent across scene types — indoors, outdoors, bounded, unbounded.
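To put the frame rates above in perspective, it helps to convert them into per-frame time budgets. The sketch below uses only the figures quoted in this post. The helper name is invented for the example, and the 60 Hz and 120 Hz display refresh rates are added here for comparison rather than taken from the paper.

```python
def frame_time_ms(fps):
    """Milliseconds available per frame at a given frame rate."""
    return 1000.0 / fps


mobile_ms = frame_time_ms(116)    # Snapdragon 8 Gen 3 figure
desktop_ms = frame_time_ms(1098)  # RTX 3090 figure
print(round(mobile_ms, 2))   # 8.62
print(round(desktop_ms, 2))  # 0.91

# Pixels shaded per second on mobile at the quoted 1600x1063 resolution.
pixels_per_second = 1600 * 1063 * 116
print(pixels_per_second)  # 197292800

# Common display budgets for comparison (assumed refresh rates).
print(round(frame_time_ms(60), 2))   # 16.67
print(round(frame_time_ms(120), 2))  # 8.33
```

At 8.62 ms per frame, the mobile result clears a 60 Hz display's budget with room to spare and lands just short of a 120 Hz one.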

Why You Should Care

Think about what this actually unlocks:

For architects and visualization studios: You scan a site or interior, process the splat, and your client can explore it photorealistically on their phone: no app install beyond a web viewer, no server-rendered stream, no compromise on quality. Real-time photorealistic walkthroughs in a 5 MB file. That’s a pitch deck that sells itself.

For game developers: Gaussian Splatting as a rendering primitive in mobile games just became viable, whether for character capture, environment scanning, or cutscene delivery. A 100 MB game asset budget used to mean no splats. Now a full scene fits in under 5 MB. This changes what’s possible in mobile game graphics, particularly for mixed-reality and AR titles.

For digital artists and content creators: Photogrammetry apps on iPhone already capture decent splats. Until now, sharing them meant either a low-quality compressed version or forcing the viewer onto a desktop. Mobile-GS is the rendering backend that makes native mobile splat viewers properly good. Expect apps built on this within months.

For XR developers: Standalone headsets — Quest, Vision Pro, future devices — are effectively mobile hardware. Real-time 3DGS at 116 FPS on Snapdragon 8 Gen 3 means the headset tier now runs splats. Photorealistic AR overlays, on-device 3D capture, instant scene reconstruction — all of this is now in the performance budget.

Try It / Follow Them

- Official project page — demo videos showing live mobile rendering

- arXiv paper (2603.11531) — full technical details

- GitHub repo — code (releasing soon per the paper)

- OpenReview page — ICLR 2026 reviews and author responses

The code isn’t fully public yet as of this writing, but the GitHub repo is live and the authors mention a release is coming. Watch it.

IK3D Lab Take

We’ve been covering Gaussian Splatting since its explosion onto the scene — NanoGS in Unreal, Apple LGTM for single-image splats, OpenUSD standardization, Arcturus 4D splatting. Each of those moved the needle. Mobile-GS feels different. It’s not a workflow improvement or a toolchain integration. It’s a hardware ceiling being demolished.

The creative constraint has always been: Gaussian Splatting is GPU-hungry, so it lives on desktop. That assumption just expired. When the rendering backend can live on a Snapdragon, the deployment surface for 3DGS explodes — apps, games, AR, architecture pitches, product visualization, social 3D. The whole pipeline shifts.

If you’re building anything in the 3D capture or visualization space, you need to be aware that your target hardware just got a massive upgrade. The scene you scanned last week? Once the code ships, it could render at 116 FPS on a phone. Plan accordingly.