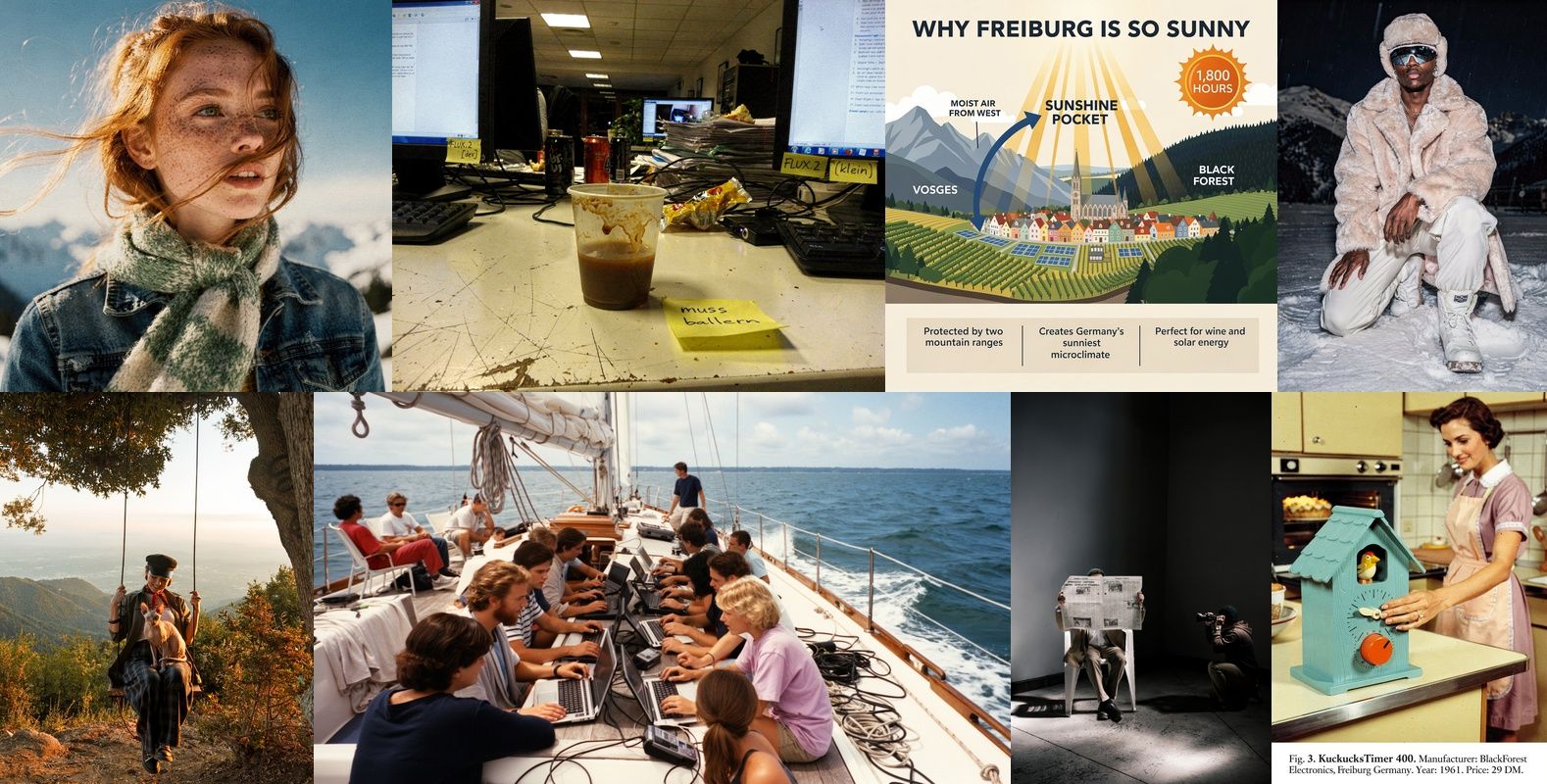

Black Forest Labs just dropped FLUX.2, and if you’ve been using FLUX.1 for your creative pipeline, this isn’t just an update. It’s a rebuild. Native multi-reference, 4-megapixel output, sub-second generation from the distilled [klein] variants on consumer GPUs, and day-0 ComfyUI support. The image generation game just moved again.

The Story

Black Forest Labs — the team that gave us FLUX.1, the open-source model that genuinely threatened Midjourney’s grip on image quality — released FLUX.2 in late 2025, with more features and open-source variants dropping into early 2026. The architecture is fundamentally different: FLUX.2 combines a Rectified Flow Transformer with an integrated Mistral-3 24B vision-language model. That VLM integration is the big deal — it means the model understands images at a much deeper level before generating. Better spatial logic, better lighting physics, better hands and faces, better text.

The full FLUX.2 [dev] model is 32 billion parameters, open-weight on Hugging Face. But the real story for most creators is the FLUX.2 [klein] variants: 4B and 9B models distilled from the full FLUX.2 that generate images in under 0.5 seconds in just 4 steps. The 4B variant is fully open under Apache 2.0 and runs in ~13GB VRAM, meaning the RTX 3090 crowd can play for free. Cards with 12GB, like the base RTX 4070, sit just under that figure and will likely need quantization or offloading.
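As a rough sanity check on those sizes, weight memory can be estimated as parameter count times bytes per parameter. The sketch below is a back-of-the-envelope calculation, not a measurement. The helper name `weight_gb` is made up for illustration, it uses 1 GB = 10^9 bytes, and it counts only the parameter totals quoted above, ignoring the text encoder, VAE, activations, and framework overhead.

```python
# Back-of-the-envelope weight memory: parameters x bytes per parameter.
# Assumption: only the quoted parameter totals are counted. Real VRAM use
# also includes the text encoder, VAE, activations, and runtime overhead.

BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    models = [
        ("FLUX.2 [dev]", 32),
        ("FLUX.2 [klein] 9B", 9),
        ("FLUX.2 [klein] 4B", 4),
    ]
    for name, size in models:
        bf16 = weight_gb(size, "bf16")
        fp8 = weight_gb(size, "fp8")
        print(f"{name}: ~{bf16:.0f} GB at bf16, ~{fp8:.0f} GB at fp8")
```

By this estimate the 4B weights alone come to about 8 GB at bf16, so the ~13GB figure presumably covers the other components as well.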

Why You Should Care

Three features make FLUX.2 a must-upgrade for creative workflows:

1. Multi-Reference — No Fine-Tuning Required

FLUX.1’s biggest limitation for character consistency and product work was that you needed LoRAs and fine-tuning hacks to keep subjects coherent across images. FLUX.2 bakes this in natively. You can reference up to 10 images simultaneously — drop in your character shots, product angles, or style references, and the model maintains consistency across outputs. For 3D artists creating concept sheets, comic creators building character bibles, or product designers iterating on visuals — this is genuinely transformative. No more spending hours training a LoRA for every new character.

2. 4-Megapixel Native Output

FLUX.1 topped out at 1MP in practical use. FLUX.2 generates natively at up to 4 megapixels (roughly 2048×2048 or 2560×1536, depending on aspect ratio) with real-world lighting physics baked in. Skin pores, fabric weave, material reflections. The model has been retrained with a new latent space that makes fine detail much easier to learn. Run the same prompt through FLUX.1 and FLUX.2 and the difference in material quality is night and day.
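The resolution arithmetic is easy to check. The sketch below confirms the megapixel counts for the two sizes mentioned and picks the largest size for a given aspect ratio under a pixel budget. Two things here are assumptions for illustration, not documented FLUX.2 requirements: the budget of 2048×2048 pixels standing in for "4MP", and snapping dimensions to multiples of 16. The function names are hypothetical too.

```python
import math

# Assumption: "4MP" is treated as a 2048 x 2048 pixel budget.
MAX_PIXELS = 2048 * 2048


def megapixels(width: int, height: int) -> float:
    """Pixel count in millions."""
    return width * height / 1_000_000


def fit_to_budget(aspect_w: int, aspect_h: int,
                  max_pixels: int = MAX_PIXELS,
                  multiple: int = 16) -> tuple[int, int]:
    """Largest (width, height) with the exact aspect ratio that fits in
    max_pixels, with both sides divisible by `multiple` (an assumption)."""
    # Each side is aspect * multiple * k; solve for the largest integer k.
    unit_area = aspect_w * aspect_h * multiple * multiple
    k = math.isqrt(max_pixels // unit_area)
    return aspect_w * multiple * k, aspect_h * multiple * k


if __name__ == "__main__":
    print(megapixels(2048, 2048))  # 4.194304
    print(megapixels(2560, 1536))  # 3.93216
    print(fit_to_budget(1, 1))     # (2048, 2048)
    print(fit_to_budget(5, 3))     # (2640, 1584)
```

Integer arithmetic with `math.isqrt` avoids floating-point rounding surprises when a side lands exactly on a boundary.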

3. Text Rendering That Actually Works

AI image models have been famously terrible at text. FLUX.1 was better than Stable Diffusion, and FLUX.2 is another leap forward. Complex typography, multilingual text, infographic layouts, UI mockups: the model handles them with a precision that makes compositing text by hand in Photoshop feel like a thing of the past.

Try It

Several ways to get hands-on immediately:

- ComfyUI: FLUX.2 [dev] and [klein] have day-0 support. Update your ComfyUI installation, grab the model from Hugging Face (black-forest-labs/FLUX.2-dev), and use the built-in workflows. NVIDIA’s FP8 optimizations cut VRAM use by about 40%, which makes [dev] practical on 24GB cards, while [klein] 4B runs in about 13GB.

- BFL Playground: The fastest way to test multi-reference. Upload your reference images and see the consistency in action with no setup.

- API (FLUX.2 [pro]): For production pipelines, the [pro] variant is accessible via the BFL API. Replicate also hosts [klein] variants for easy integration.

- Hugging Face Spaces: Free testing, with queues, on HF Spaces. Good for first impressions before committing to a local setup.
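To make the choice concrete, here is a minimal sketch that maps available VRAM to a starting point, using only the figures quoted in this article (24GB for [dev] with FP8, about 13GB for [klein] 4B). The function name and the exact cutoffs are illustrative assumptions. Real headroom depends on resolution, the number of reference images, and runtime overhead.

```python
# Rough variant picker based on the VRAM figures quoted in this article.
# Assumption: the thresholds are approximate, not official requirements.


def suggest_variant(vram_gb: float) -> str:
    """Suggest a FLUX.2 starting point for a given amount of VRAM."""
    if vram_gb >= 24:
        return "FLUX.2 [dev] with FP8 weights"
    if vram_gb >= 13:
        return "FLUX.2 [klein] 4B"
    return "hosted option (BFL Playground, API, or Hugging Face Spaces)"


if __name__ == "__main__":
    for vram in (24, 16, 8):
        print(f"{vram} GB -> {suggest_variant(vram)}")
```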

If you’re on an RTX 3090 or 4090 and already running FLUX.1 workflows, the upgrade path is clean. The [klein] 4B open-source model is honestly remarkable for its size class — it beats FLUX.1-schnell in quality at a fraction of the compute.

IK3D Lab Take

FLUX.2 is the image generation upgrade we’ve been waiting for since FLUX.1 launched. The native multi-reference feature alone changes the economics of character-consistent creative work — no more LoRA training overhead, no more juggling separate tools for style consistency. For 3D artists, the 4MP output with proper material rendering opens up reference image generation as a real part of the pipeline (think: generating consistent character concept sheets to use as reference in Blender or Cinema 4D). For comic and sequential art creators, character consistency without fine-tuning is the unlock they’ve been asking for.

The [klein] open-source release is also a significant move — a 4B Apache 2.0 model that generates in under half a second with multi-reference support is an infrastructure play. Expect to see it embedded in local tools, Blender add-ons, and ComfyUI nodes very quickly. Black Forest Labs keeps shipping, and that’s exactly what the open creative community needed.
