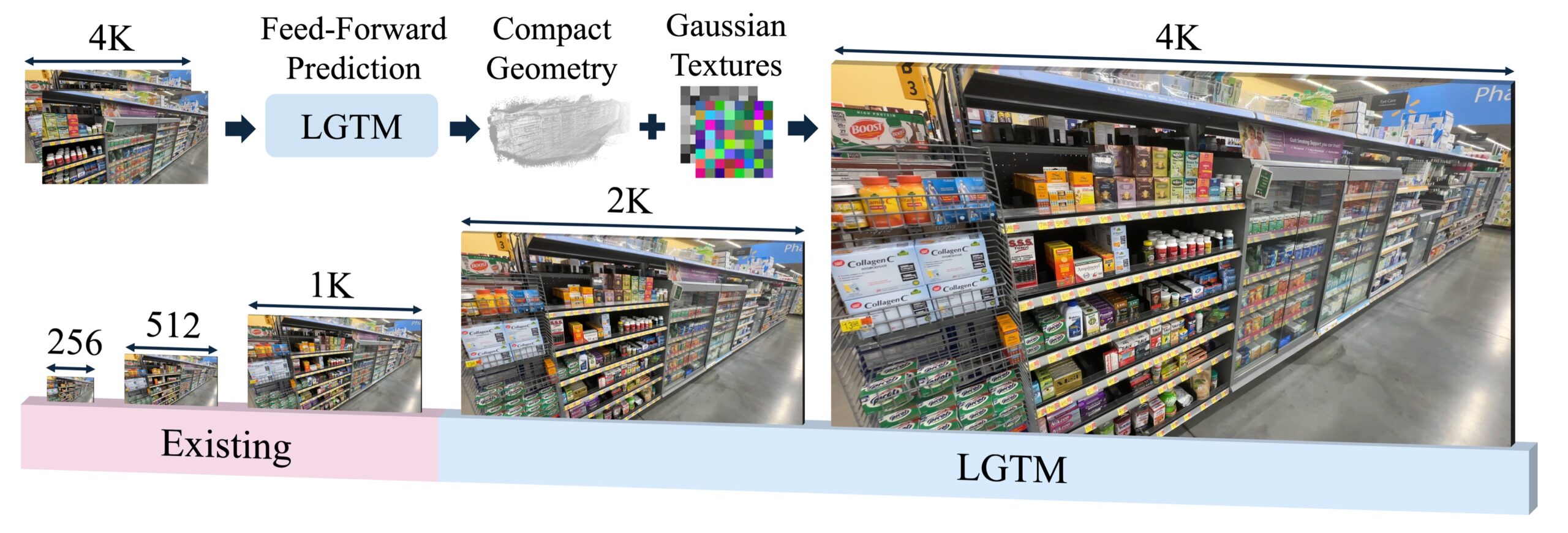

Feed-forward Gaussian splatting has been stuck at a hard ceiling: push resolution past 1080p and memory explodes. Apple just published a paper at ICLR 2026 that breaks through that ceiling and reaches 4K at a small fraction of the memory cost you would expect.

The Problem That Capped 3DGS at 1080p

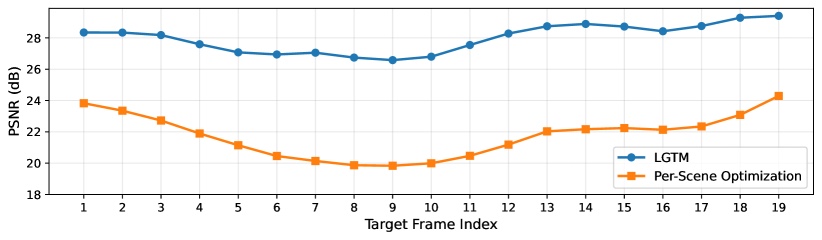

3D Gaussian Splatting has taken over the novel view synthesis world. It's fast, high-quality, and real-time, and everyone loves it. But feed-forward methods (the ones that reconstruct a scene in a single pass, with no per-scene training) have a dirty secret: they predict one Gaussian primitive per pixel. Go from 1080p to 4K and you go from ~2 million to ~8 million primitives. Memory grows in lockstep with pixel count, which means quadratically with image side length. Methods like NoPoSplat crash with OOM errors at 2K.
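To put rough numbers on the one-Gaussian-per-pixel rule, here is a minimal sketch. The function name and the `num_views` parameter are made up for illustration; the only thing taken from the text is that a pixel-aligned method emits one primitive per input pixel.

```python
def pixel_aligned_primitives(width, height, num_views=1):
    """Primitive count when every input pixel spawns exactly one Gaussian."""
    return width * height * num_views


# Standard 16:9 resolutions; the labels are just dictionary keys.
RESOLUTIONS = {"1080p": (1920, 1080), "4K": (3840, 2160)}

for name, (w, h) in RESOLUTIONS.items():
    count = pixel_aligned_primitives(w, h)
    print(f"{name}: {count:,} Gaussians per input image")

# Doubling each side quadruples the pixel count, and with it the primitives.
growth = pixel_aligned_primitives(3840, 2160) / pixel_aligned_primitives(1920, 1080)
print(f"1080p -> 4K growth: {growth:.0f}x")
```

The count is per input image, so a two-view method doubles it again (`num_views=2`).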

This isn’t a hardware limitation. It’s a fundamental architectural problem baked into how pixel-aligned Gaussian prediction works. Until LGTM, nobody had a clean fix.

What LGTM Actually Does

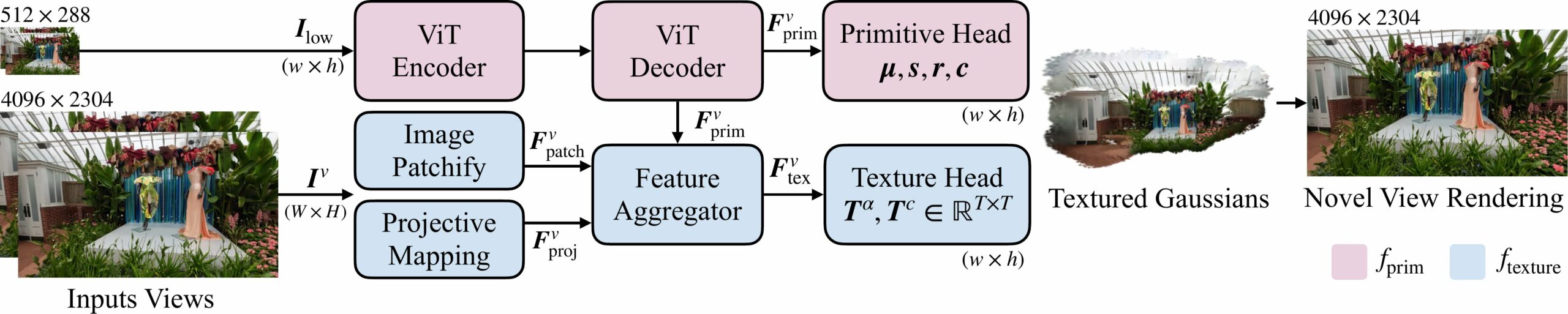

LGTM stands for Less Gaussians, Texture More. The insight is elegant: if you decouple geometry from appearance, you can stop scaling the number of Gaussians with resolution. The system has two networks working together:

- Primitive Network: Takes a low-resolution input and predicts a compact set of 3D Gaussian primitives, far fewer than one per output pixel

- Texture Network: Takes a high-resolution input and attaches a small RGBA texture map to each Gaussian primitive

The geometry stays lightweight. The detail lives in the texture maps. When you rasterize, each Gaussian's texture map gets sampled based on the viewing angle and the results are composited in depth order, so you get sharp 4K output without a 4K-sized point cloud.
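The sample-then-composite idea can be sketched in plain Python. This is not LGTM's rasterizer: the code isn't out, and the paper's exact texture parameterization isn't covered here. The sketch assumes each primitive carries a small RGBA grid, that something upstream has already worked out where the view ray lands on it as `(u, v)` in `[0, 1]`, and that the samples are blended with standard front-to-back alpha compositing. All function names are hypothetical.

```python
def bilinear_sample(texture, u, v):
    """Sample an RGBA texture (rows of (r, g, b, a) tuples) at u, v in [0, 1]."""
    height, width = len(texture), len(texture[0])
    # Clamp, then map normalized coordinates to continuous texel coordinates.
    x = min(max(u, 0.0), 1.0) * (width - 1)
    y = min(max(v, 0.0), 1.0) * (height - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0

    def lerp(a, b, t):
        return tuple(p + (q - p) * t for p, q in zip(a, b))

    top = lerp(texture[y0][x0], texture[y0][x1], fx)
    bottom = lerp(texture[y1][x0], texture[y1][x1], fx)
    return lerp(top, bottom, fy)


def composite_front_to_back(hits):
    """Blend (depth, rgba) samples for one pixel, nearest first.

    Assumption: a real splatting rasterizer would also scale alpha by the
    Gaussian falloff at the hit point; that factor is left out here.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through
    for _, (r, g, b, a) in sorted(hits, key=lambda hit: hit[0]):
        weight = transmittance * a
        color[0] += weight * r
        color[1] += weight * g
        color[2] += weight * b
        transmittance *= 1.0 - a
    return tuple(color), 1.0 - transmittance


# Toy example: a 2x2 texture fading from red to black, sampled at its center,
# sitting half-transparent in front of an opaque blue primitive.
red_fade = [[(1.0, 0.0, 0.0, 0.5), (0.0, 0.0, 0.0, 0.5)],
            [(1.0, 0.0, 0.0, 0.5), (0.0, 0.0, 0.0, 0.5)]]
near = (1.0, bilinear_sample(red_fade, 0.5, 0.5))
far = (2.0, (0.0, 0.0, 1.0, 1.0))
pixel_rgb, pixel_alpha = composite_front_to_back([far, near])
print(pixel_rgb, pixel_alpha)
```

The point of the split is visible even in the toy: output sharpness depends on how finely the texture is sampled, not on how many primitives are in the list.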

The Numbers Are Wild

Going from 512×288 to 4096×2304 is a 64× increase in pixel count. With pixel-aligned methods, that means 64× more Gaussians. With LGTM, it costs 1.80× more memory and 1.47× more inference time. That’s it. That’s the whole trade-off. You get 4K for almost free.
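The arithmetic behind that comparison is easy to check. This sketch reads the reported 1.80× and 1.47× as multipliers over the 512×288 baseline, and it assumes, for comparison only, that a pixel-aligned method's cost grows linearly with its Gaussian count. Both are assumptions of this example, not statements from the paper.

```python
def pixel_count(width, height):
    return width * height


low_res = (512, 288)
high_res = (4096, 2304)

# 8x wider and 8x taller, so 64x the pixels.
pixel_ratio = pixel_count(*high_res) / pixel_count(*low_res)

# Factors quoted in the text, read as "times the low-resolution baseline".
reported_memory_factor = 1.80
reported_time_factor = 1.47

# Assumption: pixel-aligned cost scales linearly with Gaussian count, so its
# hypothetical factor equals pixel_ratio. The gap is how far below that the
# reported factors sit.
memory_gap = pixel_ratio / reported_memory_factor
time_gap = pixel_ratio / reported_time_factor

print(f"pixel ratio: {pixel_ratio:.0f}x")
print(f"memory: {reported_memory_factor}x reported vs {pixel_ratio:.0f}x linear "
      f"(about {memory_gap:.1f}x lower)")
print(f"time:   {reported_time_factor}x reported vs {pixel_ratio:.0f}x linear "
      f"(about {time_gap:.1f}x lower)")
```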

LGTM trains at 2K and 4K using under 30 GB of VRAM. NoPoSplat cannot even complete training at 2K due to OOM errors. The gap is not marginal — it’s a different category.

And it’s not niche. LGTM works across all the major feed-forward scenarios:

- Single-view (replaces Flash3D)

- Two-view, pose-free (beats NoPoSplat)

- Two-view, posed (improves DepthSplat)

- Multi-view (works with VGGT)

Why You Should Care

Right now, most real-time 3D reconstruction tools cap their output at 720p or 1080p — not because 4K is hard to display, but because generating it costs too much. Splat captures of environments, objects, people — all of it stays low-res in practice.

LGTM points toward a world where:

- AR/VR headsets can reconstruct spaces at native display resolution in real time

- Object scanning tools generate production-grade splats from a handful of photos

- Instant 3D from single images stops looking like it came from 2020

- Video-to-3D pipelines can output 4K frames without a render farm

This is Apple ML Research, so it also signals that Apple is thinking hard about 4K Gaussian splatting in real-world device contexts — Vision Pro being the obvious one. The timing is not a coincidence.

Try It / Follow It

The code isn’t public yet — the repo shows “coming soon” at the time of writing. But you can:

- Read the full paper on arXiv: 2603.25745

- Watch the video comparisons on the official project page

- Follow lead author Yixing Lao on GitHub (@yxlao), where the code is likely to land first

- Check the Apple ML Research page for updates

IK3D Lab Take

This is exactly the kind of paper that doesn’t get enough hype because it’s “just infrastructure.” No flashy app, no viral demo reel, no product launch. Just a research team at Apple quietly solving the thing that was blocking 3DGS from reaching its full potential.

When the code drops, expect to see it folded into every serious splat pipeline within months. Feed-forward 4K reconstruction is about to become the baseline expectation. LGTM is the reason why.

Keep an eye on this one.