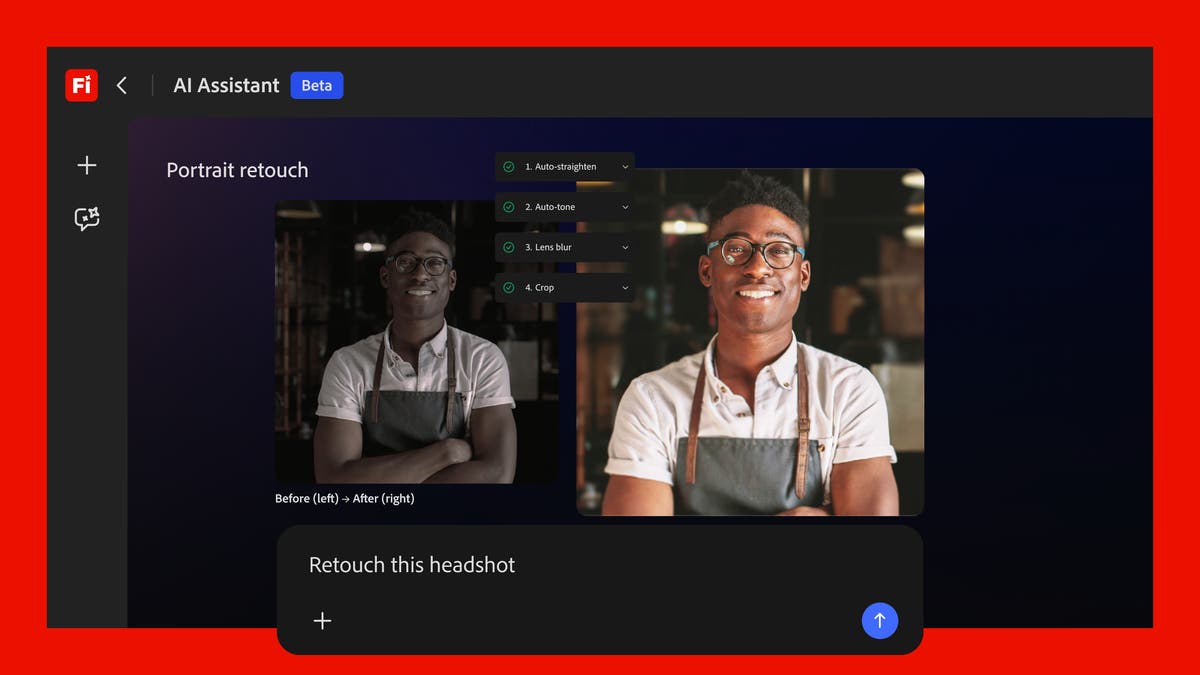

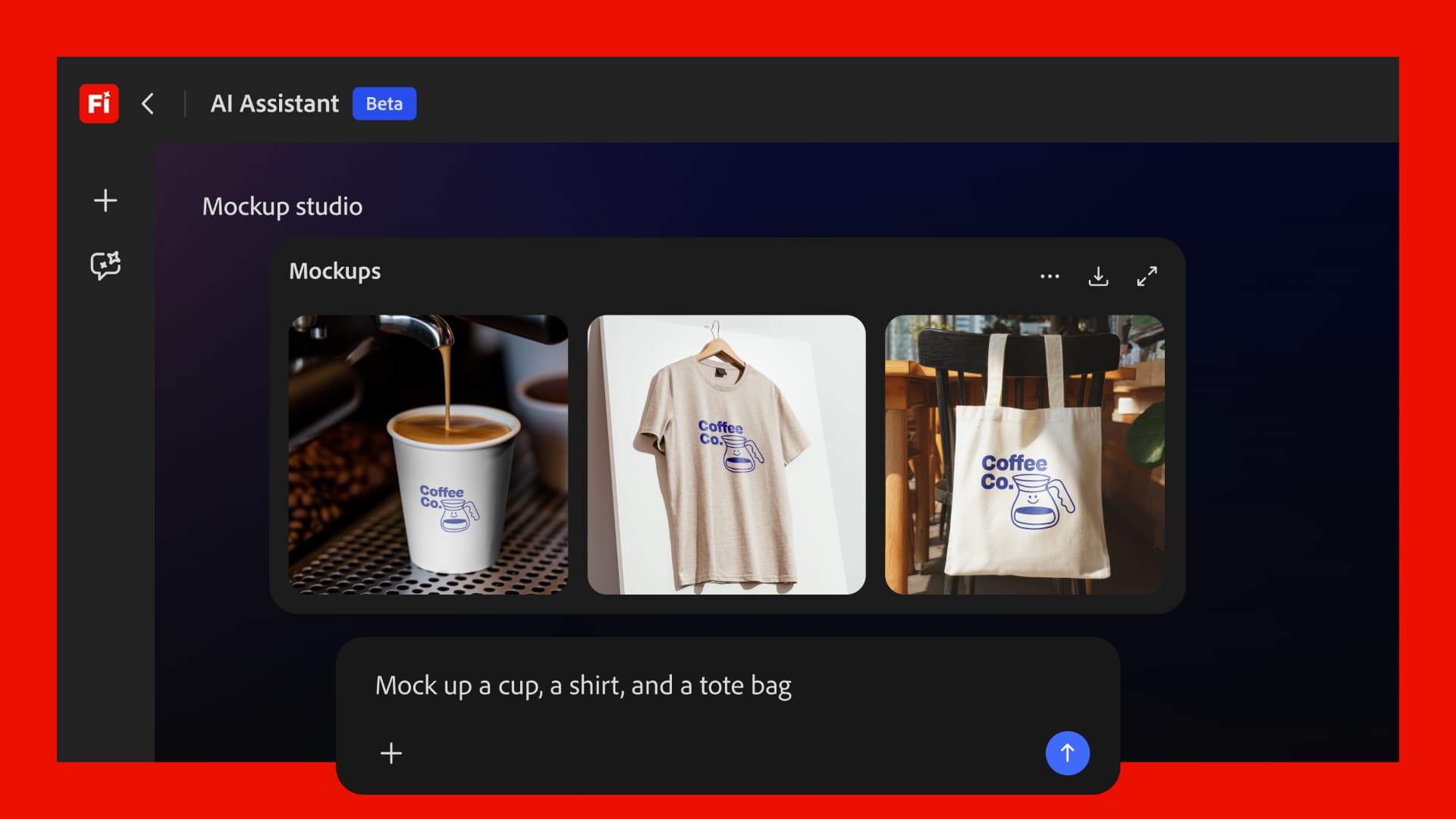

Adobe just turned your Creative Cloud subscription into something it was never supposed to be: an AI that thinks across apps. Firefly AI Assistant — the official name for what Adobe was calling Project Moonlight — dropped at NAB 2026 on April 15, and it’s not a chatbot. It’s a creative agent that actually does the work.

The Story

For years, Adobe’s apps have been powerful silos. You jumped from Lightroom to Photoshop to Premiere, copy-pasting, re-exporting, manually bridging each tool to the next. Tedious, yes — but also weirdly accepted as “just how it works.” That model just broke.

Firefly AI Assistant is the missing orchestration layer. From a single conversational prompt, it can:

- Pull a raw photo from Lightroom and apply a specific editing style

- Generate three aspect-ratio variants in Photoshop with Generative Extend

- Create matching social media graphics in Express (Instagram, Facebook, TikTok)

- Deliver all assets to collaborators via Frame.io for review

One thread. No app switching. You describe the outcome, the agent handles the routing.

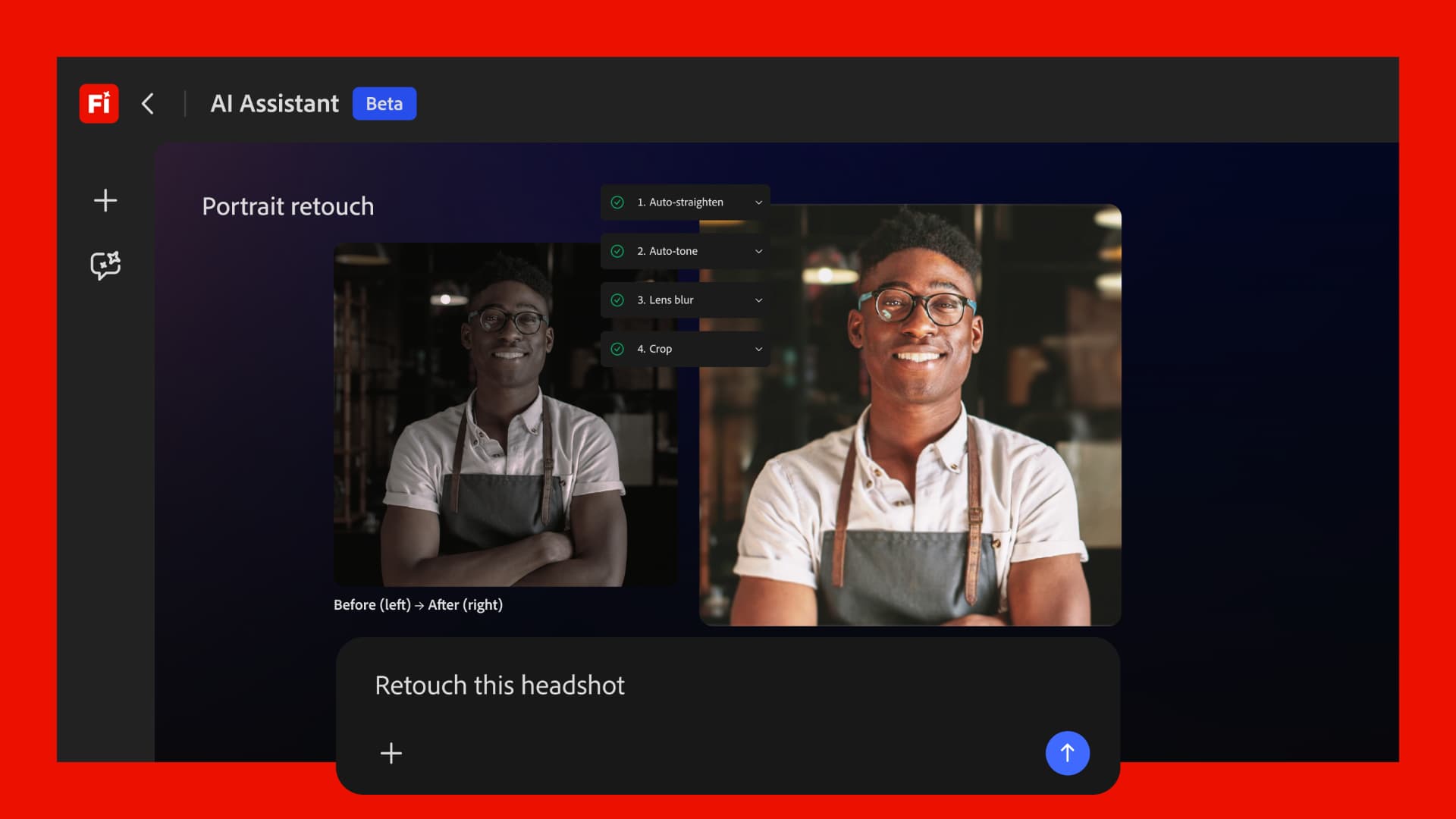

This is the Creative Skills model: pre-built multi-step workflows you trigger with natural language, then customize or extend to fit your style. Think of them as macros, but smart. Adobe previewed the assistant at Adobe MAX in October 2025 under the codename “Project Moonlight.” Now it has a real name, a public roadmap, and a beta on the way.
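To make the "macros, but smart" idea concrete, here is a minimal Python sketch of a skill as an ordered list of steps that you can extend with your own. Everything in it is hypothetical: `Skill`, the step functions, the style name, and the aspect ratios are placeholders for illustration, not Adobe's API or anything Adobe has published.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model for illustration only; none of these names come from Adobe.
# A step takes the working context and returns an updated copy of it.
Step = Callable[[dict], dict]


@dataclass
class Skill:
    name: str
    steps: list[Step] = field(default_factory=list)

    def extend(self, step: Step) -> "Skill":
        """Return a new skill with one more step appended (the original is unchanged)."""
        return Skill(self.name, [*self.steps, step])

    def run(self, context: dict) -> dict:
        """Run every step in order, passing the context along."""
        for step in self.steps:
            context = step(context)
        return context


def apply_style(ctx: dict) -> dict:
    # Placeholder for "apply a specific editing style".
    return {**ctx, "style": "warm-film"}


def make_variants(ctx: dict) -> dict:
    # Placeholder ratios; the announcement does not say which three are used.
    return {**ctx, "variants": ["1:1", "4:5", "9:16"]}


def send_for_review(ctx: dict) -> dict:
    # Placeholder for handing assets to collaborators.
    return {**ctx, "sent_to_review": True}


# A "pre-built" skill, then a customized version with an extra step.
social_pack = Skill("social-pack", [apply_style, make_variants])
custom_pack = social_pack.extend(send_for_review)

result = custom_pack.run({"source": "IMG_0001.raw"})
```

The point of the sketch is the shape, not the details: the pre-built workflow stays intact, and customizing it means adding or swapping steps rather than rebuilding the chain by hand.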

The timing matters. Adobe chose NAB 2026, the broadcast and video production trade show, to drop this alongside a batch of video-specific announcements. Firefly Video Editor now includes Enhance Speech (Adobe Podcast’s noise reduction tech), direct access to more than 800 million Adobe Stock assets without leaving the editor, and two new video models: Kling 3.0 and Kling 3.0 Omni. Firefly’s total model roster now stands at 30+, including Runway Gen-4.5, Veo 3.1, and Adobe’s own Firefly Image Model 5 (now GA).

There’s also a third-party signal that shouldn’t get lost: Adobe confirmed it’s building Firefly AI Assistant integrations for Anthropic’s Claude and other external models. So this isn’t just Adobe-land. The agent can pull in capabilities from outside the ecosystem.

Why You Should Care

If you’re a 3D artist or creative technologist working inside Adobe’s ecosystem, here’s the angle that hits closest to home: Firefly just added Rotate Object, a feature that converts 2D images into 3D so you can reposition objects and people in different poses and rotate them to show different perspectives. This isn’t Tripo or Step1X-3D-level mesh generation, but it’s built by Adobe, licensed, and works on reference photos you already have.

The bigger deal is the agentic model itself. If you run a solo or small studio — renders, architecture viz, product presentation, motion graphics — you know the last-mile pain: adapting deliverables for clients, formatting assets for platforms, prepping reference libraries. Creative Skills maps directly to that grind. It doesn’t replace your creative decisions; it executes the operational scaffolding around them.

Kling 3.0 matters here too. Until now, getting serious AI video generation meant juggling APIs, separate tools, and licensing headaches. With Kling 3.0 inside Firefly (up to 5-minute generation, 4K output, image-to-video), you have best-in-class video gen baked into the Adobe workflow, fully licensed, with no external subscriptions required.

Try It / Follow It

- Firefly AI Assistant public beta: Coming in the next few weeks — watch firefly.adobe.com for access

- Adobe Summit 2026: April 19–22, Las Vegas — live demos and deeper feature reveals expected

- Official announcement: Adobe Blog — Introducing Firefly AI Assistant

- Press release: Adobe — New Creative Agent and Generative AI Innovations

- NAB 2026 coverage: RedShark News | TechCrunch | VentureBeat

IK3D Lab Take

We’ve been watching agentic AI transform coding, writing, and research, and wondering when creative production would get the same upgrade. Adobe just made its move. The Firefly AI Assistant is the most ambitious thing Adobe has announced in years, and the timing with NAB is smart: they’re planting the flag in the professional video and production community before the feature even ships.

Will it live up to the demo? Cross-app orchestration is notoriously hard to get right, and Adobe has a mixed record of turning beta promises into reality. But the direction is undeniably correct. Creative Skills, if they work reliably, don’t just save time; they change what’s possible for a solo creator or small team. One person with a well-tuned agent stack can produce at studio scale. That’s not hype. That’s just what happens when the boring operational layer finally gets automated.

Public beta imminent. Adobe Summit April 19–22 is the next signal. Watch closely.