If you’ve been watching the AI 3D generation space, you already know the names: Meshy, Rodin, Tripo, Hunyuan3D, TRELLIS. Most of them are closed-source, API-gated, and hiding behind paywalls. That’s the norm. Then StepFun drops Step1X-3D: fully open-source, state-of-the-art among open models, and with benchmark results competitive with the proprietary juggernauts. This is the open-source moment the 3D generation space has been waiting for.

What Is Step1X-3D?

Step1X-3D is a two-stage AI framework for generating high-fidelity, textured 3D assets from a single image. Built by StepFun AI, it was released open-source in May 2025 and has since been progressively updated with training code, data preprocessing pipelines, and multi-view generation capabilities.

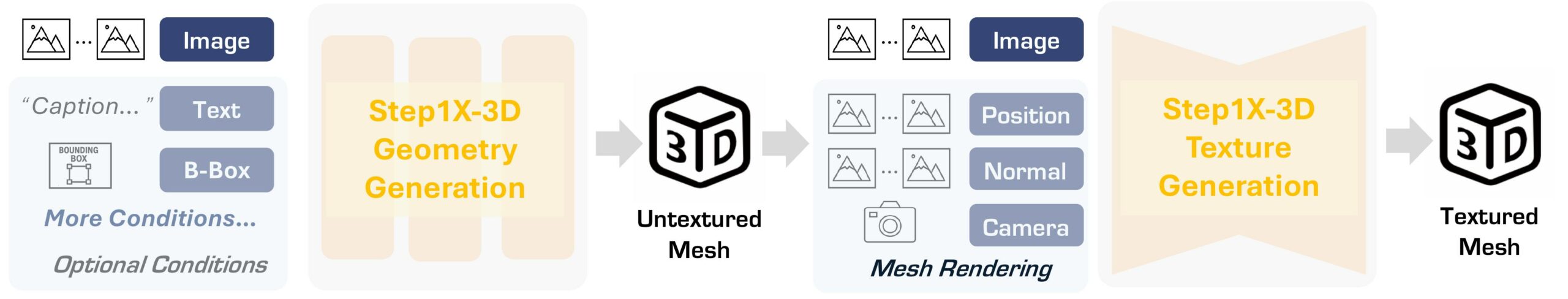

The pipeline works in two stages:

- Geometry Generation: A hybrid VAE-DiT model takes your input image and generates a TSDF (truncated signed distance function) representation. A watertight mesh is then extracted from it via marching cubes. The model's perceiver-based latent encoding, combined with sharp-edge sampling, preserves fine details that other methods tend to blur over.

- Texture Synthesis: A multi-view generator fine-tuned from SD-XL wraps that geometry in photorealistic (or stylized) textures. It conditions on both the input image and the generated geometry. Latent-space synchronization keeps the views consistent with one another.
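The article doesn’t spell out how the latent synchronization works internally. What follows is a generic sketch of the idea, not Step1X-3D’s implementation. It assumes a precomputed map from each view’s latent pixels to shared surface texels (the hypothetical `texel_ids` array, with -1 marking background). At each synchronization step, every pixel’s latent is replaced with the average over all views that see the same texel, so overlapping views can’t drift apart. The function name `synchronize_views` is also hypothetical.

```python
import numpy as np


def synchronize_views(latents: np.ndarray, texel_ids: np.ndarray, num_texels: int) -> np.ndarray:
    """Average multi-view latents that land on the same surface texel.

    latents:   (V, N, C) float latents for V views with N pixels and C channels each.
    texel_ids: (V, N) index of the surface texel each pixel sees, or -1 for background.
    Returns a copy in which every foreground pixel holds the mean latent of all
    pixels (across all views) that see the same texel; background is untouched.
    """
    V, N, C = latents.shape
    flat_lat = latents.reshape(-1, C)
    flat_ids = texel_ids.reshape(-1)
    fg = flat_ids >= 0

    # Scatter: accumulate per-texel sums and counts in a shared texture space.
    sums = np.zeros((num_texels, C), dtype=np.float64)
    counts = np.zeros(num_texels, dtype=np.int64)
    np.add.at(sums, flat_ids[fg], flat_lat[fg])
    np.add.at(counts, flat_ids[fg], 1)

    # Gather: write each texel's mean back to every pixel that sees it.
    out = flat_lat.astype(np.float64)
    out[fg] = sums[flat_ids[fg]] / counts[flat_ids[fg], None]
    return out.reshape(V, N, C)
```

In a diffusion sampler, a step like this would run between denoising iterations. The views can still differ where only one of them sees a region, but they can no longer disagree about shared surface.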

The result: watertight meshes with clean topology and textures that actually match the reference image.
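Why watertight? The geometry stage predicts a TSDF and extracts its zero level set. The toy sketch below samples a TSDF for an analytic sphere on a regular grid and counts the grid cells that straddle the surface, which are the cells marching cubes would triangulate. It is only a stand-in: in Step1X-3D the field comes from the VAE decoder rather than a formula, and the grid size and truncation width here are arbitrary choices.

```python
import numpy as np


def sphere_tsdf(resolution: int = 32, radius: float = 0.5, trunc: float = 0.1) -> np.ndarray:
    """Sample a truncated signed distance field (TSDF) of a sphere on a regular grid.

    Negative inside, positive outside; clamped to [-trunc, trunc] and then
    normalized so every value lies in [-1, 1].
    """
    # Regular grid spanning the cube [-1, 1]^3.
    axis = np.linspace(-1.0, 1.0, resolution)
    x, y, z = np.meshgrid(axis, axis, axis, indexing="ij")
    # Exact signed distance to a sphere centered at the origin.
    sdf = np.sqrt(x**2 + y**2 + z**2) - radius
    # Truncation: values far from the surface are clamped, since only the
    # band around the zero crossing matters for surface extraction.
    return np.clip(sdf, -trunc, trunc) / trunc


def surface_cells(tsdf: np.ndarray) -> int:
    """Count grid cells whose 8 corners are not all on the same side of the surface.

    These are the cells that marching cubes would triangulate.
    """
    inside = tsdf < 0
    nx, ny, nz = inside.shape
    # Each shifted slice picks one of the 8 corners of every cell.
    corners = np.stack([
        inside[i:i + nx - 1, j:j + ny - 1, k:k + nz - 1]
        for i in (0, 1) for j in (0, 1) for k in (0, 1)
    ])
    straddling = corners.any(axis=0) & ~corners.all(axis=0)
    return int(straddling.sum())


if __name__ == "__main__":
    tsdf = sphere_tsdf()
    print("value range:", tsdf.min(), tsdf.max())
    print("cells crossing the surface:", surface_cells(tsdf))
```

Passing this array to `skimage.measure.marching_cubes(tsdf, level=0.0)` would return the sphere’s vertices and faces. The field is defined throughout the volume, so its zero level set has no loose boundary as long as it doesn’t touch the grid edge. That closed level set is why TSDF-based pipelines produce watertight meshes.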

The Data Story Is Actually Impressive

Most 3D generation papers gloss over data. Step1X-3D doesn’t. The team built a rigorous curation pipeline that processed over 5 million raw 3D assets to produce a clean dataset of 2 million high-quality assets. They’ve released 800K UIDs of these curated assets publicly on Hugging Face — a genuine gift to the research community.

Sources: Objaverse (320K validated samples), Objaverse-XL (480K), ABO, 3D-FUTURE, and internal data. That’s not a toy dataset. It’s a serious industrial-scale data pipeline, and its public portion is available for free.
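A curation pipeline like this boils down to a chain of per-asset filters plus per-source bookkeeping. The sketch below is hypothetical throughout. The `Asset` fields, the face-count thresholds, and the helper names are illustrative placeholders, not the criteria Step1X-3D actually applies.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Asset:
    uid: str
    source: str                   # e.g. "Objaverse", "Objaverse-XL", "ABO"
    face_count: int
    has_texture: bool
    converts_to_watertight: bool  # did watertight (TSDF) conversion succeed?


# Hypothetical thresholds, for illustration only.
MIN_FACES = 500
MAX_FACES = 2_000_000


def keep(asset: Asset) -> bool:
    """Apply every filter; an asset survives only if it passes all of them."""
    return (
        MIN_FACES <= asset.face_count <= MAX_FACES
        and asset.has_texture
        and asset.converts_to_watertight
    )


def curate(assets: list[Asset]) -> tuple[list[Asset], Counter]:
    """Return the surviving assets and a per-source count of survivors."""
    kept = [a for a in assets if keep(a)]
    return kept, Counter(a.source for a in kept)
```

A quick sanity check on the numbers above: 320K from Objaverse plus 480K from Objaverse-XL comes to 800K, the same size as the public UID release. Likewise, keeping 2 million out of more than 5 million processed means at most about 40% of the raw assets survived curation.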

Why This Matters for Creators

Here’s the kicker: LoRA fine-tuning carries over from 2D to 3D synthesis. Because the texture generator is built on SD-XL, much of the 2D LoRA ecosystem (character styles, art styles, material presets) can in principle be reused for 3D texture generation, although how well any individual LoRA transfers will vary. If you’ve been building a consistent character style in Stable Diffusion, Step1X-3D can carry that identity into 3D.
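For readers who haven’t looked under the hood: LoRA freezes a pretrained weight matrix W and learns a low-rank update, so the effective weight becomes W' = W + (alpha / r) * B @ A. An adapter is shaped to a specific base weight. A LoRA trained against SD-XL layers therefore matches the shapes of any model that reuses those layers, which is what makes the 2D-to-3D transfer plausible. This NumPy sketch shows the standard formulation for a single linear layer. It is not Step1X-3D code, and the rank, alpha, and init scale are arbitrary.

```python
import numpy as np


class LoRALinear:
    """A frozen linear layer plus a trainable low-rank update: W' = W + (alpha / r) * B @ A."""

    def __init__(self, weight: np.ndarray, rank: int = 4, alpha: float = 4.0, seed: int = 0):
        out_dim, in_dim = weight.shape
        rng = np.random.default_rng(seed)
        self.weight = weight                              # frozen base weight, (out_dim, in_dim)
        self.A = rng.normal(0.0, 0.01, (rank, in_dim))    # down-projection, small random init
        self.B = np.zeros((out_dim, rank))                # up-projection, zero init so W' == W at start
        self.scale = alpha / rank

    def __call__(self, x: np.ndarray) -> np.ndarray:
        # x: (batch, in_dim). Base path plus the scaled low-rank path.
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def merged_weight(self) -> np.ndarray:
        # Folding the update into W yields a plain linear layer with no extra inference cost.
        return self.weight + self.scale * self.B @ self.A
```

Starting `B` at zero means the adapted model initially behaves exactly like the base model. `merged_weight()` shows why LoRAs are cheap to ship and swap: the whole update folds back into one matrix of the original shape.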

The controllability options:

- Symmetry control: Generate symmetric or asymmetric geometry from the same input — great for characters, vehicles, props

- Surface sharpness: Sharp, normal, or smooth geometry modes — mechanical hard-surface or organic forms

- Style transfer: Cartoon, sketch, photorealistic — all from the same geometry pass
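The article doesn’t describe how the symmetry control is implemented. If you want to verify that a “symmetric” output really is symmetric, one simple check is to sample the shape’s signed distance field on a centered grid and compare it with its mirror image. This is a minimal sketch that assumes the object is centered at the origin and aligned with the grid axes. The function name `mirror_asymmetry` is hypothetical.

```python
import numpy as np


def mirror_asymmetry(field: np.ndarray, axis: int = 0) -> float:
    """Mean absolute difference between a grid-sampled field and its mirror image.

    Assumes the grid is centered on the object, so reversing the array along
    `axis` reflects the shape across the plane through the grid center.
    A result of 0.0 means the field is perfectly mirror-symmetric.
    """
    return float(np.mean(np.abs(field - np.flip(field, axis=axis))))


if __name__ == "__main__":
    coords = np.linspace(-1.0, 1.0, 21)
    x, y, z = np.meshgrid(coords, coords, coords, indexing="ij")
    centered = np.sqrt(x**2 + y**2 + z**2) - 0.5          # sphere at the origin
    shifted = np.sqrt((x - 0.3)**2 + y**2 + z**2) - 0.5    # sphere moved along x
    print(mirror_asymmetry(centered), mirror_asymmetry(shifted, axis=0))
```

A mesh output would first need converting to a distance grid with a mesh library. What counts as “close enough to zero” is a per-asset judgment call.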

Compare that to Meshy or Tripo, where you basically get what you get. Step1X-3D puts the art direction back in your hands.

Benchmarks: Where Does It Stand?

In the team’s comparisons, Step1X-3D outperforms existing open-source methods on standard 3D generation benchmarks and achieves quality competitive with commercial solutions such as Meshy-4, Rodin v1.5, and Tripo v2.5. The geometry is genuinely cleaner, with fewer artifacts, better edge definition, and more coherent UV mapping.

Is it perfect? No. Complex hairstyles and thin appendages are still challenging. But for props, environments, vehicles, and most organic characters, the output quality is remarkable for a fully open model.

How to Run It

- Zero install: Try the live Hugging Face demo; it’s free and runs in the browser, no local GPU needed

- Local GPU: Clone the GitHub repo and run inference with the 1.3B geometry model. Needs 16GB+ VRAM

- Fine-tune it: Full training code is available — train your own domain-specific 3D generation model

- ComfyUI: A ComfyUI node is on the roadmap — when it lands, this becomes accessible to the entire ComfyUI ecosystem overnight
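If you plan to run locally, it’s worth first confirming that your card clears the 16GB VRAM bar. This minimal sketch reads each GPU’s total memory through `nvidia-smi`’s query flags. It assumes an NVIDIA GPU with the driver tools on your PATH and isn’t part of the Step1X-3D repo; the helper names are hypothetical. The check compares against 16 × 10^9 bytes rather than 16 GiB, because a card sold as 16 GB can report slightly under 16,384 MiB.

```python
import shutil
import subprocess

REQUIRED_GB = 16  # minimum VRAM quoted in the article for local geometry inference


def parse_total_mib(output: str) -> list[int]:
    """Parse `nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits`.

    That query prints one integer per GPU, in MiB.
    """
    return [int(line.strip()) for line in output.splitlines() if line.strip()]


def gpus_meeting_requirement(totals_mib: list[int], required_gb: float = REQUIRED_GB) -> list[int]:
    """Return the indices of GPUs whose total memory is at least `required_gb` decimal GB."""
    # Compare in decimal GB: a card sold as "16 GB" can report slightly less than 16 * 1024 MiB.
    required_mib = required_gb * 1e9 / 2**20
    return [i for i, mib in enumerate(totals_mib) if mib >= required_mib]


def query_total_mib() -> list[int]:
    """Ask nvidia-smi for each GPU's total memory; return [] if that isn't possible."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        return []
    return parse_total_mib(result.stdout)


if __name__ == "__main__":
    usable = gpus_meeting_requirement(query_total_mib())
    print("GPUs with enough VRAM:", usable if usable else "none found")
```

This checks total memory, not free memory. Anything else running on the GPU, such as a desktop session or another model, eats into the budget.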

The Open Source Angle: Why It Changes the Game

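The commercial 3D AI tools are converging toward walled gardens: API credits, subscription gates, output licenses that restrict commercial use. Step1X-3D is the counterweight. Because it is fully Apache-licensed (models, training code, and data), game studios, indie devs, architects, and VFX houses can build on top of it without legal landmines. One caveat: the released data is a list of asset UIDs, and the underlying assets (from sources such as Objaverse) carry their own per-object licenses.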

A game studio could fine-tune Step1X-3D on their internal art style and generate unlimited on-brand 3D assets. An architecture firm could train it on their material library and produce concept models from sketches. That infrastructure now exists — openly — for the first time at this quality level.

What’s Next

The StepFun team has been shipping updates steadily since May 2025. On the roadmap: multi-view conditioning, bounding-box control, skeleton-guided generation, and the promised ComfyUI node. If the pace continues, Step1X-3D could become the Stable Diffusion of 3D generation — the open foundation everything else builds on.

The 3D generation space needed this. Rodin talks to your models, Meshy generates game arsenals, TRELLIS does voxels — and now Step1X-3D gives the whole ecosystem an open, controllable foundation to build from. Keep watching this one.

Links: GitHub | Live Demo | Technical Report | Project Page